Here’s a shocker: it turns out that most of today’s biggest television companies, which have been pushing for 8K adoption, are selling a display technology that may offer consumers little visible benefit.

Warner Bros. recently ran a double-blind study and found that most of its participants couldn’t tell much of a difference between 8K UHD and 4K UHD. The two formats have resolutions of 7680 x 4320 and 3840 x 2160, respectively.
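Because 8K doubles both the width and the height of 4K, it packs four times as many pixels onto the screen. The short Python sketch below just works through that arithmetic for the two resolutions cited in the study; the `pixel_count` helper and `RESOLUTIONS` table are our own illustrative names, not anything used by Warner Bros.

```python
# Resolutions cited in the study (width x height, in pixels).
RESOLUTIONS = {
    "8K UHD": (7680, 4320),
    "4K UHD": (3840, 2160),
}


def pixel_count(width: int, height: int) -> int:
    """Return the total number of pixels on a width x height display."""
    return width * height


pixels_8k = pixel_count(*RESOLUTIONS["8K UHD"])
pixels_4k = pixel_count(*RESOLUTIONS["4K UHD"])

print(f"8K UHD: {pixels_8k:,} pixels")   # 8K UHD: 33,177,600 pixels
print(f"4K UHD: {pixels_4k:,} pixels")   # 4K UHD: 8,294,400 pixels
print(f"Ratio: {pixels_8k / pixels_4k}")  # Ratio: 4.0
```

In other words, the 8K clips had roughly 33 million pixels per frame against about 8 million for 4K, and that fourfold jump is what most participants struggled to see.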

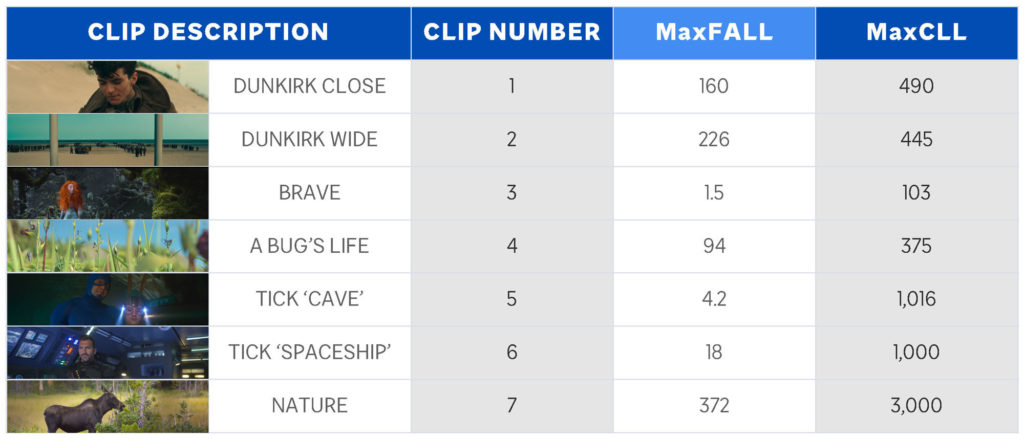

The double-blind study used clips from three feature films (Dunkirk, Brave, A Bug’s Life), one live-action series (The Tick), and nature footage by Stacey Spears. All of the clips were available in native 8K resolution and encoded in HDR10.

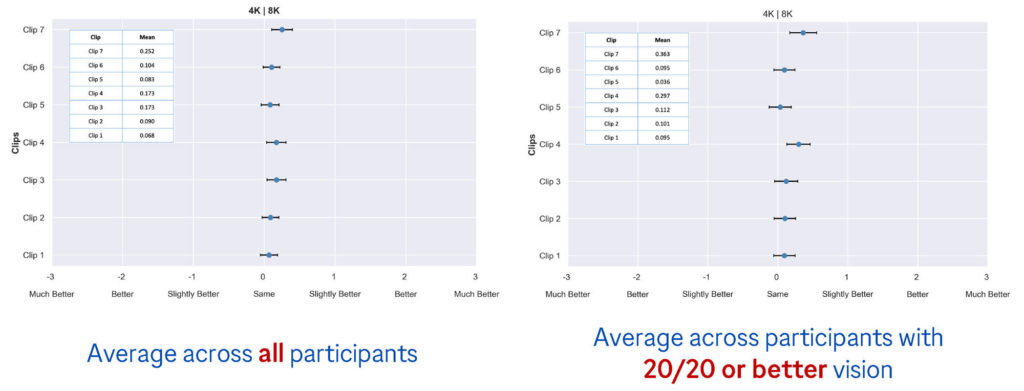

Few of the 139 participants could spot differences between the original 8K clips and their 4K versions when the two were played back in alternating sequences on an LG 88Z9 88-inch 8K OLED TV. (The 4K versions were created by downscaling the 8K originals and then upscaling them again for display, using industry-standard post-production software.)

Poor eyesight was not a factor, as each participant had their vision checked before testing began. Most had 20/20 vision or better: 27 percent had better than 20/20 vision and 34 percent had 20/20 vision, while the remaining 39 percent averaged 20/25 or 20/30.

Only participants with 20/10 acuity who sat in the front row noticed differences, and only in two clips: A Bug’s Life and Spears’s nature footage. Even then, they described the 8K clips as only “slightly better” than 4K.

What’s really surprising is that many participants rated the 4K footage as better. Michael Zink, VP of Technology at Warner Bros., attributed this to guesswork. “I believe the reason you see a large number of people rating ‘4K better than 8K’ is that they really can’t see a difference and are simply guessing,” Zink said.