During today’s Financial Analyst Day 2020 event, Radeon Technologies Group SVP David Wang shared new details about AMD’s next-generation GPU architecture, RDNA 2.

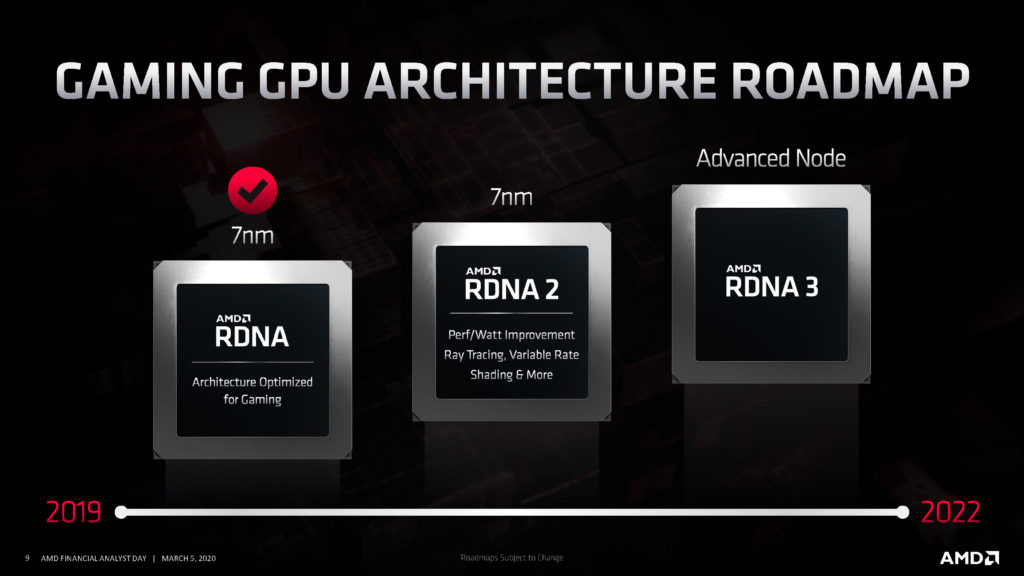

RDNA 2 is what will power AMD’s upcoming lineup of Radeon products, as well as next-generation consoles such as the Xbox Series X. While we don’t know the full story behind the PlayStation 5 yet, Microsoft recently confirmed that the Series X utilizes a “custom designed processor leveraging AMD’s latest Zen 2 and RDNA 2 architectures.”

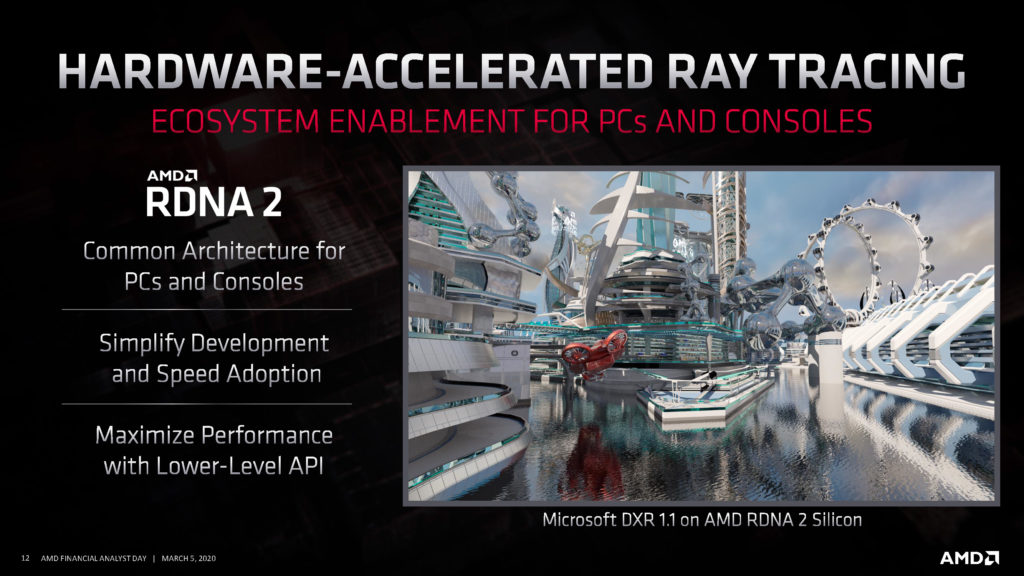

Thanks to RDNA 2, variable rate shading and hardware-accelerated DirectX ray tracing will be supported on the Xbox Series X and future Radeon cards. But what kind of general performance improvements should gamers expect?

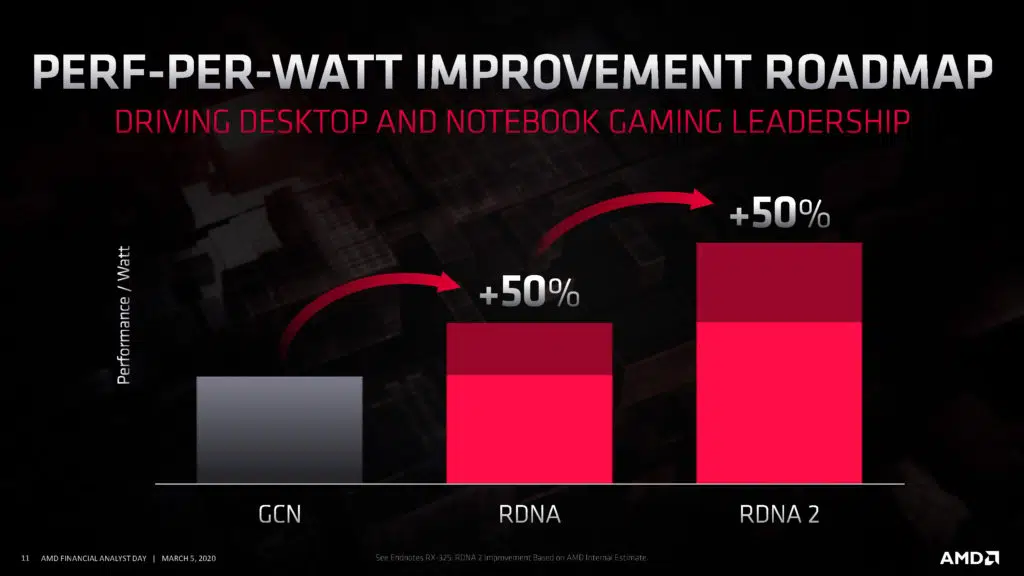

According to one slide that Wang shared, RDNA 2 provides a 50-percent performance-per-watt improvement over the first-generation RDNA architecture. AMD labels this figure an “internal estimate,” but if it holds, the new architecture should prove to be a sizable jump.

A roadmap of the RDNA architecture was also presented. It confirms some of the major features that RDNA 2 will support, such as ray tracing and variable rate shading, but what’s really interesting is the mention of RDNA 3, which AMD expects to introduce by 2022. Based on the company’s work so far, another 50-percent improvement in perf-per-watt could be in order, though AMD has not committed to a figure.
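To put those percentages in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes AMD’s 50-percent internal estimate holds for RDNA 2 and, purely as speculation, that RDNA 3 repeats it; the function and variable names are ours for illustration, and none of this is measured data.

```python
def compound_gain(per_gen_gain, generations):
    """Cumulative perf-per-watt multiplier after stacking equal gains."""
    return (1 + per_gen_gain) ** generations


def power_at_same_performance(multiplier):
    """Fraction of the original power needed to match the original performance."""
    return 1 / multiplier


# AMD's internal estimate for RDNA 2; assumed (not confirmed) for RDNA 3.
GAIN = 0.50

rdna2 = compound_gain(GAIN, 1)  # RDNA 2 relative to first-gen RDNA
rdna3 = compound_gain(GAIN, 2)  # hypothetical RDNA 3 relative to first-gen RDNA

print(f"RDNA 2 vs. RDNA: {rdna2:.2f}x perf-per-watt")
print(f"RDNA 3 vs. RDNA: {rdna3:.2f}x perf-per-watt (speculative)")
print(f"Power needed for RDNA-level performance on RDNA 2: "
      f"{power_at_same_performance(rdna2):.0%}")
```

In other words, two back-to-back 50-percent gains would compound to 2.25x rather than a simple doubling, and a 1.5x perf-per-watt gain means roughly two-thirds of the power for the same performance.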

RDNA 2 might ultimately serve as a turning point for ray-tracing adoption, as the architecture will be at the full disposal of both PC and console developers. AMD says it will have more to share about it soon.