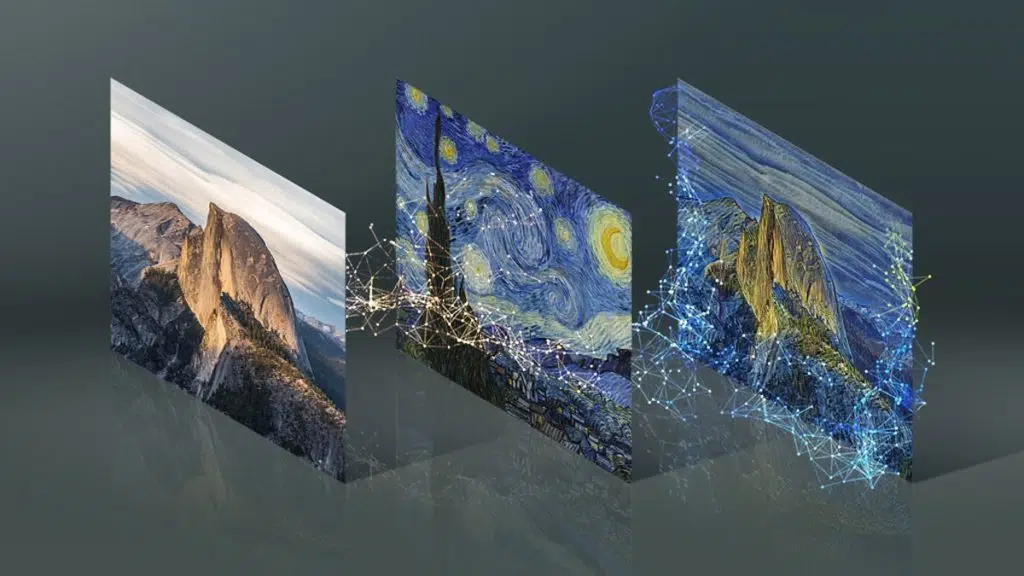

NVIDIA has shared a new application called GauGAN2 that’s smart enough to translate words and sentences into what the company describes as “photorealistic masterpieces.” Available now via NVIDIA’s AI Demo page, the app lets users generate anything from sunsets to snowcapped mountains simply by typing a phrase and pressing a button. GauGAN2’s magic is enabled by a deep learning model.

From NVIDIA:

The AI model behind GauGAN2 was trained on 10 million high-quality landscape images using the NVIDIA Selene supercomputer, an NVIDIA DGX SuperPOD system that’s among the world’s 10 most powerful supercomputers. The researchers used a neural network that learns the connection between words and the visuals they correspond to like “winter,” “foggy” or “rainbow.”

Compared to state-of-the-art models specifically for text-to-image or segmentation map-to-image applications, the neural network behind GauGAN2 produces a greater variety and higher quality of images.

NVIDIA points out that GauGAN2 users who are Star Wars fans can go so far as to recreate the iconic twin suns of Tatooine: entering the phrase “desert hills sun” generates the base scene, and a second sun can then be sketched in by hand.

Source: NVIDIA