Intel Chief Performance Strategist Ryan Shrout has shared a video showing a PCIe 5.0 SSD reading at more than 13 GB/s on an Alder Lake processor. The demonstration was intended for CES 2022, but he released a teaser early after Intel switched to a virtual presence at the show.

Perks of the job! Was going to save this demo for #CES2022 but with that off the table, why not just share it with everyone right now?! Here’s a 12th Gen @intel Core i9-12900K system paired with a new @Samsung PM1743 PCIe 5.0 SSD getting over 13GB/s!! pic.twitter.com/oyL08KzDtV

— Ryan Shrout (@ryanshrout) December 30, 2021

The system pairs a Core i9-12900K with an ASUS motherboard, 32 GB of memory, and two Samsung PM1743 PCIe 5.0 enterprise SSDs connected through custom card adapters. A single drive hit a read speed of 13.8 GB/s, double that of the PCIe 4.0 OS drive. The write speed is said to be 6.6 GB/s.

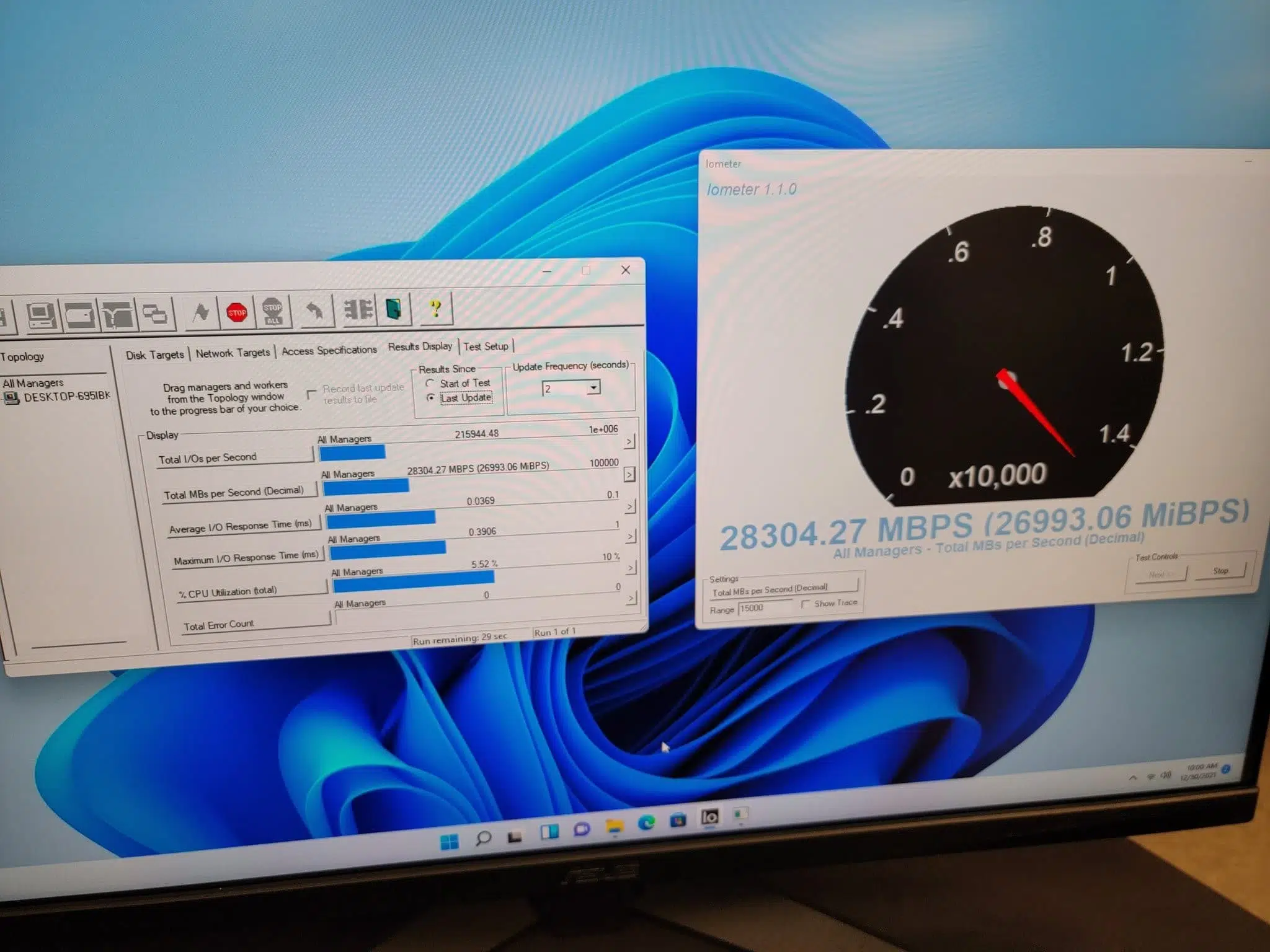

He also tested both drives running at the same time as independent devices. This required disabling the EVGA GeForce RTX 3080 GPU to free up the PCIe 5.0 lanes needed for the test. In IOmeter, the combined read speed peaked at just over 28 GB/s.
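For context, the reported figures can be compared against the theoretical ceiling of the link. The sketch below is a rough back-of-the-envelope calculation, not part of Shrout's demo. It assumes each drive uses a four-lane (x4) link, which the source does not state, and it counts only 128b/130b line encoding, so real-world ceilings are somewhat lower. The function names are illustrative.

```python
# Theoretical PCIe bandwidth per direction, counting only line-encoding
# overhead. Packet headers and flow control reduce real throughput further.

GT_PER_SEC = {"4.0": 16.0, "5.0": 32.0}  # raw transfer rate per lane (GT/s)
ENCODING = 128 / 130                     # 128b/130b encoding (PCIe 3.0 and later)


def link_bandwidth_gbs(gen: str, lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    return GT_PER_SEC[gen] * ENCODING / 8 * lanes


def efficiency(measured_gbs: float, gen: str, lanes: int) -> float:
    """Measured throughput as a fraction of the theoretical link ceiling."""
    return measured_gbs / link_bandwidth_gbs(gen, lanes)


# ASSUMPTION: x4 links per drive; the article does not give the lane width.
gen5_x4 = link_bandwidth_gbs("5.0", 4)
gen4_x4 = link_bandwidth_gbs("4.0", 4)

print(f"PCIe 4.0 x4 ceiling: {gen4_x4:.2f} GB/s")
print(f"PCIe 5.0 x4 ceiling: {gen5_x4:.2f} GB/s")
print(f"Single drive at 13.8 GB/s: {efficiency(13.8, '5.0', 4):.0%} of ceiling")
# "Just over 28 GB/s" is approximated as 28.0 across two x4 links (8 lanes).
print(f"Two drives at 28.0 GB/s: {efficiency(28.0, '5.0', 8):.0%} of ceiling")
```

Under these assumptions, a PCIe 5.0 x4 link tops out at about 15.75 GB/s, exactly twice the PCIe 4.0 x4 figure, so the 13.8 GB/s read is roughly 88% of the theoretical maximum.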

Source: Ryan Shrout (via VideoCardz)