Tired of getting cussed out by 14-year-olds in the latest Call of Duty? That could be a thing of the past thanks to GGWP, an artificial intelligence-powered moderation platform being developed by a group of tech veterans that aims to combat toxicity in video games and promote more positive play. Bitkraft Ventures led a $12 million seed round for the platform, which derives its name from the phrase “Good Game, Well Played.” Publishers can use GGWP to customize a moderation system that catches, reviews, and responds to reported incidents. A report management system provides context for each incident, assesses its severity, and presents historical player data.

“There’s a lot of research that shows bad behavior is bad for business,” said Dennis Fong, CEO of GGWP, in a statement. “Twenty-two percent of players have quit playing a game due to toxicity. With GGWP, we are modernizing game moderation. With the ability to respond at scale, we can dramatically improve game experiences, and in turn improve game businesses.”

“GGWP has an effective and easy-to-use platform powered by incredibly sophisticated technology,” added Jens Hilger of Bitkraft Ventures. “The games industry desperately needs GGWP’s AI-based moderation, and if you consider the fact that we all increasingly live online, social media and other online spaces will need solutions for toxicity in our digital societies.”

GGWP is an AI system that tracks and fights in-game toxicity (Engadget) (via The Hollywood Reporter)

It feels like the report button often sends complaints directly into a trash can, which is then set on fire quarterly by the one-person moderation department. According to legendary Quake and Doom esports pro Dennis Fong (better known as Thresh), that’s not far from the truth at many AAA studios.

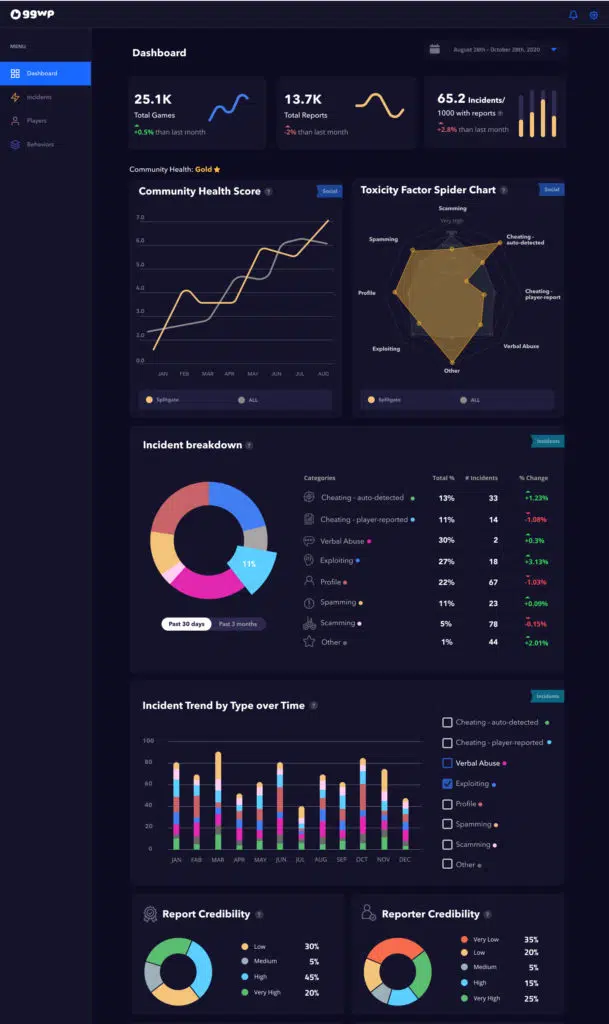

This week he announced GGWP, an AI-powered system that collects and organizes player-behavior data in any game, allowing developers to address every incoming report with a mix of automated responses and real-person reviews. Once it’s introduced to a game — “Literally it’s like a line of code,” Fong said — the GGWP API aggregates player data to generate a community health score and break down the types of toxicity common to that title. After all, every game is a gross snowflake when it comes to in-chat abuse.
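To make the aggregation idea concrete, here is a minimal Python sketch of how individual reports could roll up into a community health score and a breakdown of toxicity types. None of this is GGWP's actual API, which the company has not described in detail here: the `Report` type, the category names, and the scoring formula are all hypothetical and chosen only for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    """A single player report (hypothetical shape, not GGWP's schema)."""
    match_id: str
    category: str  # invented labels such as "chat_abuse" or "griefing"


def toxicity_breakdown(reports):
    """Return each category's share of all reports, as fractions summing to 1."""
    counts = Counter(r.category for r in reports)
    total = sum(counts.values())
    return {cat: n / total for cat, n in counts.items()} if total else {}


def community_health_score(reports, matches_played):
    """Hypothetical score: the percentage of matches that drew no report."""
    if matches_played <= 0:
        raise ValueError("matches_played must be positive")
    reported_matches = len({r.match_id for r in reports})
    reported_matches = min(reported_matches, matches_played)
    return 100.0 * (1 - reported_matches / matches_played)


# Invented sample data: four reports spread over three of ten matches.
reports = [
    Report("m1", "chat_abuse"),
    Report("m1", "griefing"),
    Report("m2", "chat_abuse"),
    Report("m3", "chat_abuse"),
]
print(toxicity_breakdown(reports))
print(community_health_score(reports, matches_played=10))
```

A real system would presumably weigh severity and context rather than simply count reports, but even this toy version shows why the breakdown differs from game to game: the category mix depends entirely on what players in that title report.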

The system can also assign reputation scores to individual players, based on an AI-led analysis of reported matches and a complex understanding of each game’s culture. Developers can then assign responses to certain reputation scores and even specific behaviors, warning players about a dip in their ratings or just breaking out the ban hammer. The system is fully customizable, allowing a title like Call of Duty: Warzone to have different rules than, say, Roblox.
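The per-game customization described above can be pictured as a table of thresholds that map a reputation score to a response. The sketch below is an assumption-laden illustration, not GGWP's configuration format: the `Rule` type, the 0 to 100 score range, the title keys, and every threshold and action name are invented.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """Apply `action` when a player's reputation falls below `below`."""
    below: float
    action: str


def pick_action(reputation, rules):
    """Return the action of the strictest matching rule, or None if none match."""
    matching = [r for r in rules if reputation < r.below]
    if not matching:
        return None
    # The rule with the lowest threshold is the most severe one that applies.
    return min(matching, key=lambda r: r.below).action


# Hypothetical rule sets: a competitive shooter tolerates more than a
# game aimed at younger players. All numbers here are made up.
RULES_BY_TITLE = {
    "shooter": [Rule(40, "warn"), Rule(15, "temp_ban")],
    "kids_sandbox": [Rule(70, "warn"), Rule(50, "mute"), Rule(30, "ban")],
}

for title, score in [("shooter", 45), ("kids_sandbox", 45), ("shooter", 10)]:
    print(title, score, pick_action(score, RULES_BY_TITLE[title]))
```

The same reputation score of 45 triggers nothing in the first rule set and a mute in the second, which is the kind of difference between titles the article describes.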