During today’s Intel Architecture Day 2021 event, Intel shared a short video demonstrating how its new high-quality super sampling technology, XeSS, might compare to NVIDIA’s DLSS and AMD’s FidelityFX Super Resolution, which likewise aim to enable higher resolutions at a lower performance cost. The early results look impressive.

As indicated in the video’s comparison sequences, Intel’s XeSS super sampling technology is able to upscale a 1080p image to 4K with what appears to be minimal quality loss. Intel’s head of graphics software, Lisa Pearce, goes a little further, boldly claiming that there is “no visible quality loss” at all in the image upscaled from 1080p compared to its native 4K counterpart.
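For a sense of scale, the 1080p-to-4K jump can be worked out with simple arithmetic. The snippet below is only an illustrative sketch, not anything from Intel’s SDK; it assumes “1080p” means 1920×1080 and “4K” means 3840×2160 (UHD), and the `pixel_count` helper is a hypothetical name used for illustration.

```python
# Illustrative arithmetic only; this is not part of Intel's XeSS SDK.
# Assumption: "1080p" is 1920x1080 and "4K" is 3840x2160 (UHD).

def pixel_count(width: int, height: int) -> int:
    """Return the number of pixels in a frame of the given size."""
    return width * height

render_pixels = pixel_count(1920, 1080)  # resolution the game renders at
output_pixels = pixel_count(3840, 2160)  # resolution shown on screen

scale_per_axis = 3840 / 1920                 # upscale factor along each axis
pixel_ratio = output_pixels / render_pixels  # output pixels per rendered pixel

print(f"rendered: {render_pixels:,} px, output: {output_pixels:,} px")
print(f"scale per axis: {scale_per_axis}x, pixel ratio: {pixel_ratio}x")
```

Under those assumptions, the upscaler has to produce four output pixels for every pixel the game actually renders, which is why reconstruction quality matters so much.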

Intel XeSS’ results are made possible by deep learning. “XeSS uses deep learning to synthesize images that are very close to the quality of native high-res rendering,” Intel explained, noting that the reconstruction is performed by a “neural network trained to deliver high performance and great quality.”

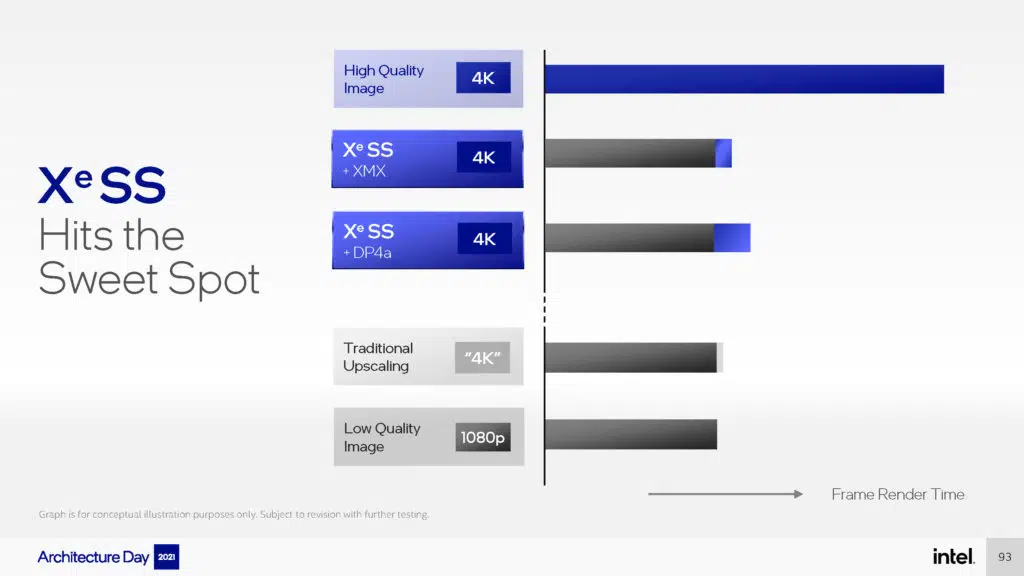

Pearce added that the cost of Intel XeSS is “relatively small” and that the AI-assisted scaling allows for performance boosts of up to 2x. Intel’s XeSS SDK will be available this month.

The content and game levels shown in this demo were created by Rens, a 3D artist, environment artist and technical art director. He is known for his outstanding photogrammetry techniques and high-end rendering skills, and has worked with top game development studios such as DICE, Epic Games and Sony.

Source: Intel