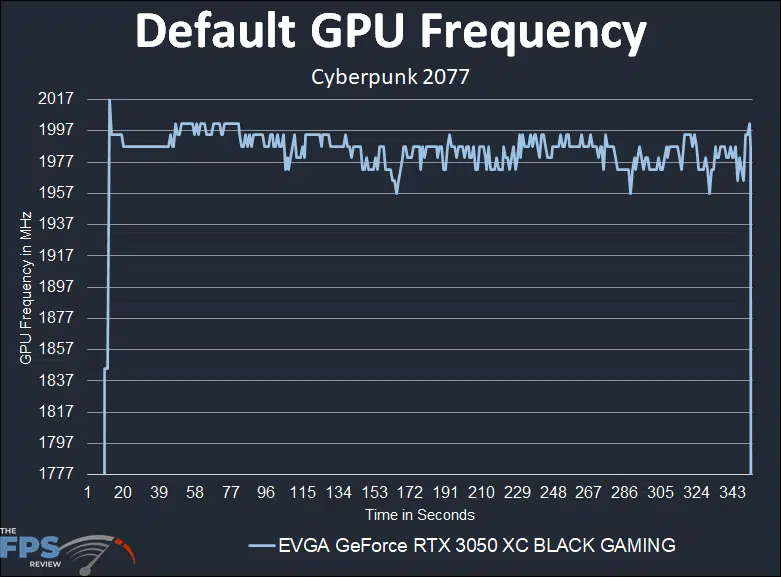

Default GPU Frequency

Before we look at performance, we need to find out the real-world frequency the video card actually runs at while gaming. With both NVIDIA and AMD GPUs today, the GPU frequency is very dynamic. The quoted “Boost Clock” is not necessarily the frequency the GPU will actually run at; typically, GPUs today exceed the “Boost Clock” dynamically. Finding out what it actually runs at lets us see how well things like cooling and power headroom are working.

To do this, we record the GPU clock frequency over time while playing a game. We use Cyberpunk 2077 for this, with a very long manual run-through at “Ultra” settings. We also record GPU-Z sensor data to look at GPU temperature, voltage, and power.

As we mentioned on page 2, EVGA accidentally applied the wrong BIOS to this particular model of the 3050 XC. It’s supposed to have a GPU Boost of 1777MHz, but instead it carries the 1845MHz GPU Boost of the higher-clocked 3050 XC tier video card EVGA offers. Our card therefore represents that higher-clocked tier. GPU Boost would still drive the frequency well above 1777MHz anyway.

In the graph above, we have set the bottom line at 1777MHz. You can see here that NVIDIA GPU Boost is boosting the frequency up quite a bit while gaming. It starts off above 1997MHz but mostly settles between 1977MHz and 1987MHz while gaming. That is about 7-8% above the 1845MHz boost clock, and roughly 11-12% above the 1777MHz boost clock. We theorize that the 1777MHz version of this card would boost to around 1900MHz+. That’s a very high clock speed to start with over the boost clock, so it will be interesting to see how much headroom it has for overclocking.
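For readers who want to check the percentages, here is a minimal sketch of the arithmetic. It uses only the figures quoted above (the 1977-1987MHz observed range and the two rated boost clocks); the function name `percent_over` and the dictionary labels are our own, chosen for illustration.

```python
def percent_over(observed_mhz: float, rated_mhz: float) -> float:
    """Return how far observed_mhz sits above rated_mhz, in percent."""
    return (observed_mhz - rated_mhz) / rated_mhz * 100.0


# Observed in-game clock range and the two rated boost clocks from the text.
observed_range_mhz = (1977.0, 1987.0)
rated_boost_mhz = {"1845MHz BIOS": 1845.0, "1777MHz BIOS": 1777.0}

for label, rated in rated_boost_mhz.items():
    low, high = (percent_over(mhz, rated) for mhz in observed_range_mhz)
    print(f"{label}: {low:.1f}% to {high:.1f}% above rated boost")
```

This only compares the clocks measured on our sample against the two rated figures; it says nothing about what a card with the 1777MHz BIOS would actually reach.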

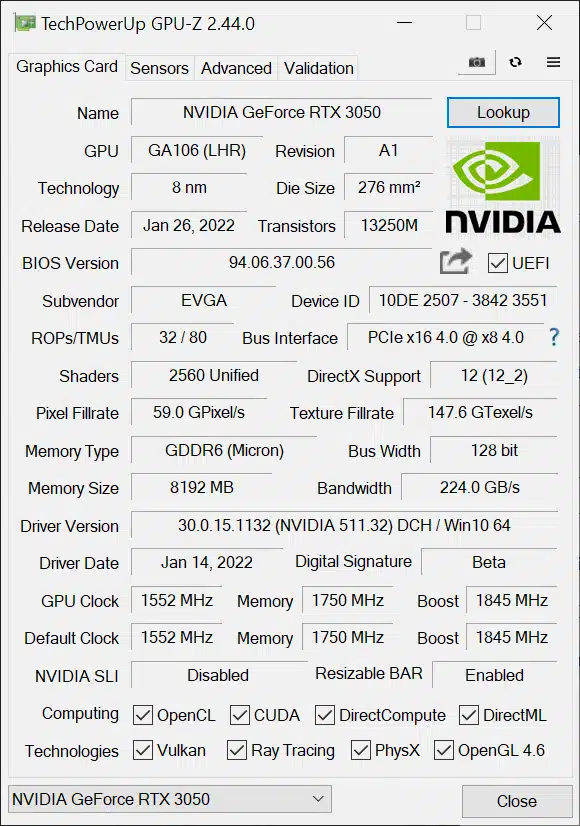

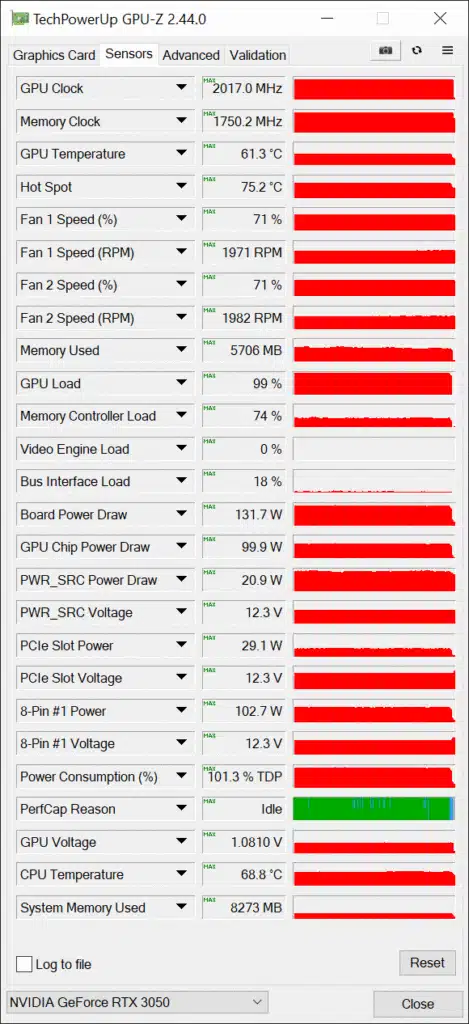

GPU-Z

According to GPU-Z, the GPU Temperature was 61.3°C and the Hot Spot was 75.2°C at 71% fan speed, so the fans were working pretty hard, but they were still very quiet. Board Power Draw was 131.7W and GPU Chip Power Draw was 99.9W. The GPU Voltage was 1.0810V.

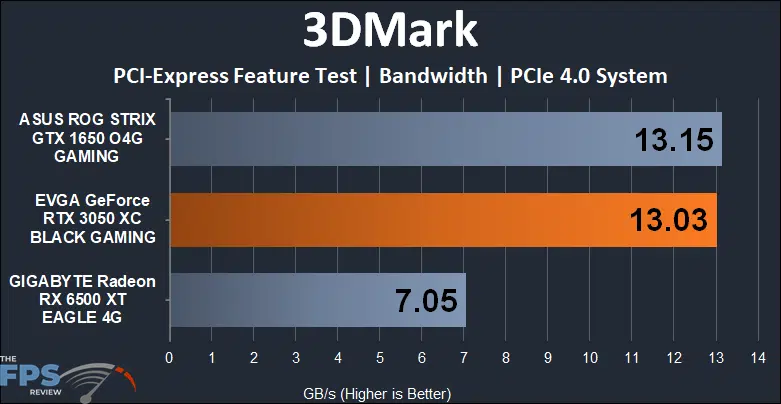

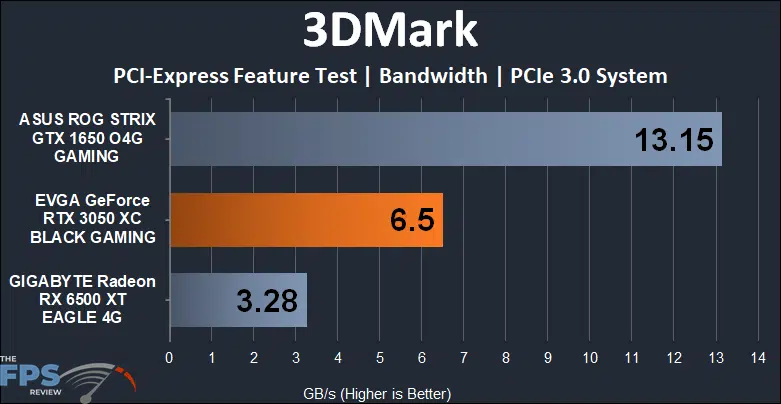

PCI-Express Bandwidth

We need to test PCI-Express Bandwidth to show how the GeForce RTX 3050 compares to the Radeon RX 6500 XT, which is constrained on PCI-Express 3.0 system platforms.

When running on a PCIe Gen 4 platform, the GeForce RTX 3050 matches the GeForce GTX 1650 in PCI-Express Bandwidth. The GeForce GTX 1650 runs on a PCIe Gen 3 x16 interface, while the GeForce RTX 3050 runs on a PCIe Gen 4 x8 interface. Gen 4 doubles the per-lane rate of Gen 3, so half the lanes deliver the same bandwidth. The Radeon RX 6500 XT, however, only runs on a PCIe Gen 4 x4 interface and thus has half the bandwidth of the other two.

When we force PCIe Gen 3 on our motherboard, we see the bandwidth go down on the GeForce RTX 3050: because it is an x8 card, it is now running at PCIe Gen 3 x8. The Radeon RX 6500 XT suffers worse. Because it is an x4 card, it is now running at PCIe Gen 3 x4 and has very low bandwidth. On PCIe Gen 3 platforms it will be constrained and bottlenecked, whereas the RTX 3050 will not.
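The lane-count and generation relationships above can be sketched with theoretical link bandwidth. This is an illustration under stated assumptions, not our measured results: it uses the per-lane rates from the PCI Express specification (8 GT/s for Gen 3, 16 GT/s for Gen 4, both with 128b/130b encoding), and the function name `pcie_bandwidth_gb_per_s` is ours. Measured bandwidth will come in below these figures because of protocol overhead.

```python
# Per-lane signaling rate in GT/s (assumption: values from the PCIe spec).
GIGATRANSFERS_PER_LANE = {3: 8.0, 4: 16.0}
# Gen 3 and Gen 4 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_bandwidth_gb_per_s(gen: int, lanes: int) -> float:
    """Theoretical one-direction link bandwidth in GB/s."""
    gigabits = GIGATRANSFERS_PER_LANE[gen] * ENCODING_EFFICIENCY * lanes
    return gigabits / 8  # 8 bits per byte


# Link configurations discussed in the text.
configs = [
    ("GeForce GTX 1650, Gen 3 x16", 3, 16),
    ("GeForce RTX 3050, Gen 4 x8", 4, 8),
    ("Radeon RX 6500 XT, Gen 4 x4", 4, 4),
    ("GeForce RTX 3050, forced Gen 3 x8", 3, 8),
    ("Radeon RX 6500 XT, forced Gen 3 x4", 3, 4),
]

for name, gen, lanes in configs:
    print(f"{name}: {pcie_bandwidth_gb_per_s(gen, lanes):.2f} GB/s")
```

On paper, Gen 3 x16 and Gen 4 x8 are identical, and each halving of lanes or step down a generation halves the figure, which is why the x4 card ends up with a quarter of the GTX 1650's link bandwidth on a Gen 3 platform.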

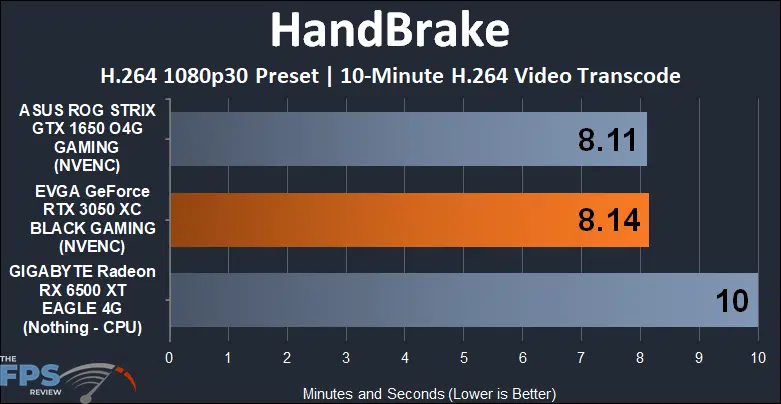

Video Encoding Performance

Another feature missing from the Radeon RX 6500 XT is a video encoding engine. This means you cannot hardware-accelerate video encoding with the GPU; it simply will not help you encode video. In addition, it lacks AV1 decode. We can demonstrate how the encoding engine helps video transcoding performance.

The GeForce RTX 3050 gives us hardware encoding, and thus our video is transcoded much faster than on the Radeon RX 6500 XT, which has to rely on the CPU alone.