The FPS Review: Quick Compare

Top-of-the-line. Cream of the crop. NVIDIA’s RTX 3090 versus AMD’s RX 6900 XT. Where do these two high-end graphics cards stand in comparison to each other? Both launched late in 2020, with the 3090 arriving in September and the 6900 XT following a few months later in December. Although each was the most advanced card its maker offered at the time, the 6900 XT launched at a significantly lower MSRP: $999 for the AMD card versus $1,499 for the NVIDIA.

Interestingly, this means the 6900 XT’s MSRP is closer to the price of NVIDIA’s 3080 and 3080 Ti models.

So what, if anything, is the 6900 XT lacking when stacked up head-to-head with the 3090? Let’s try to parse these differences and get a better idea of how they compare. If you’d like to take a longer look at these cards separately, check out our in-depth reviews of the 6900 XT and the RTX 3090.

AMD Radeon RX 6900 XT and NVIDIA RTX 3090.

| Specification | GeForce RTX 3090 | RX 6900 XT |
|---|---|---|
| Architecture | Ampere | RDNA 2 |
| Process Node | Samsung 8N | TSMC 7nm |
| GPU Cores | 10,496 CUDA cores | 5,120 Stream processors |
| Tensor Cores | 328 | N/A |
| Ray Tracing Cores | 82 RT Cores | 80 Ray Accelerators |
| Boost Clock | 1695MHz | 2250MHz |
| Memory | 24GB GDDR6X | 16GB GDDR6 + 128MB Infinity Cache |
| Memory Speed (effective) | 19.5Gbps | 16Gbps |
| TDP/TBP | 350W | 300W |
| MSRP | $1,499 | $999 |

Right off the bat, we see a massive difference in core counts. This is partly because the 3090 is simply the more powerful card, hence the higher price tag, but a gap this large also shows how differently the two companies approach GPU architecture. Ray tracing core counts are thankfully very close, so we should see some decent performance in ray-traced situations from both of these competitors.

The lack of Tensor cores in AMD’s 6000 series is another of these intentional design differences: AMD chose the software-based FSR, whereas NVIDIA’s DLSS requires this additional hardware.

The 6900 XT’s noticeably higher boost clock should help make up for a bit of the core gap. However, the 3090 packs a whopping 24GB of GDDR6X compared to the 6900 XT’s 16GB, making NVIDIA the probable best choice for super high resolutions.
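To put a rough number on how far the clock advantage goes toward closing the core gap, here is a minimal sketch of the usual paper calculation for peak FP32 throughput, using only the core counts, boost clocks, and MSRPs from the table. It assumes every core completes one fused multiply-add (two floating-point operations) per clock, which is a theoretical ceiling rather than a prediction of game performance. The function name and the dictionary layout are made up for illustration.

```python
# Specs copied from the comparison table in this article.
CARDS = {
    "RTX 3090": {"cores": 10496, "boost_mhz": 1695, "msrp_usd": 1499},
    "RX 6900 XT": {"cores": 5120, "boost_mhz": 2250, "msrp_usd": 999},
}


def peak_fp32_tflops(cores, boost_mhz):
    """Theoretical peak FP32 throughput in TFLOPS.

    Assumption: each core retires one fused multiply-add
    (counted as 2 floating-point operations) per clock cycle.
    """
    return cores * 2 * boost_mhz * 1e6 / 1e12


for name, spec in CARDS.items():
    tflops = peak_fp32_tflops(spec["cores"], spec["boost_mhz"])
    usd_per_tflops = spec["msrp_usd"] / tflops
    print(f"{name}: {tflops:.1f} TFLOPS peak, ${usd_per_tflops:.0f} per TFLOPS at MSRP")
```

On paper this works out to roughly 35.6 TFLOPS for the 3090 and 23.0 TFLOPS for the 6900 XT, so the higher clock narrows the gap but does not close it. Paper numbers like these tend to flatter Ampere, since part of its CUDA core count is shared between floating-point and integer work, which is one reason the real gaming gap is usually smaller than the ratio suggests.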

Gaming Performance

Alright, let’s stop theorizing over numbers on a manufacturer’s chart. Now it’s time to take a look at how these two cards do in some real gaming situations.

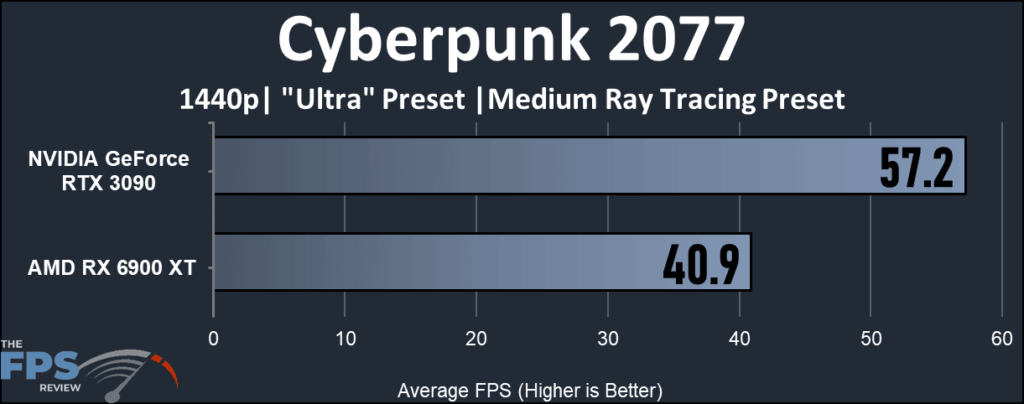

Though there are some obvious performance differences, the biggest gaps we see are in games that support DLSS but not FSR. Cyberpunk at 4K with ray tracing shows the largest gap, with the 6900 XT becoming quite unplayable without DLSS to help it out.

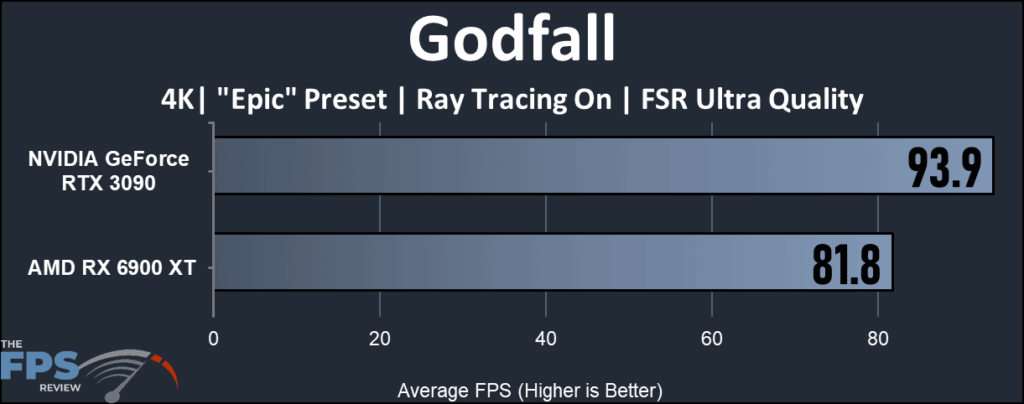

On the other hand, when we look at Godfall, FSR is able to boost the performance of both cards, since AMD’s software solution is compatible with any hardware. The 3090 still wins, but the performance is much closer. The memory size gap may also be playing a part in the lower FPS of AMD’s card, though that is more likely to become an issue in the future, as 16GB is generally considered to be plenty for most modern games.

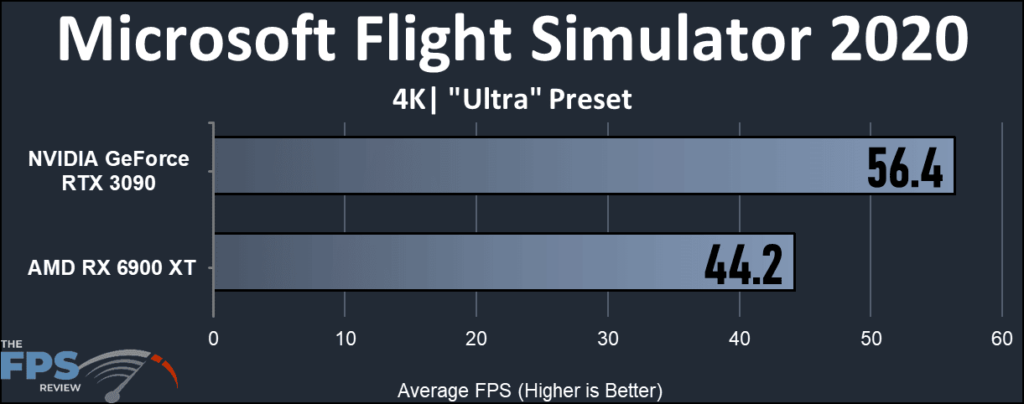

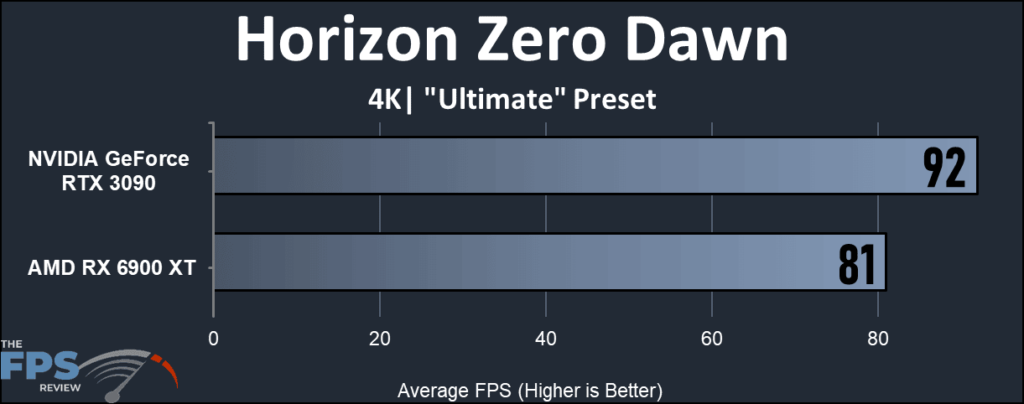

In games without DLSS or FSR, such as Microsoft Flight Simulator and Horizon Zero Dawn above, we see fairly solid performance out of both cards, with the 6900 XT holding its own even at 4K. Still, the 3090 is the clear winner, both where DLSS is available and in general performance.

Conclusion

Even though NVIDIA’s RTX 3090 has an obvious hardware advantage, both on paper and in-game, it is worth noting that the largest performance gaps we see tend to come down to whether a game is DLSS-only or also supports FSR. With wider FSR support, we really could see the RX 6900 XT encroach on some of the 3090’s lead here.

However, the reality is that until FSR is more widely adopted in games, the 6900 XT will not be able to match the 3090’s performance at the highest settings and the highest resolutions. This is especially true in DLSS-compatible games, where we see NVIDIA just run away with the victory.

FAQ

Will these cards work with my PCIe 3.0 slot?

Both the 6900 XT and the RTX 3090 are PCIe 4.0 cards that are backward compatible with PCIe 3.0. There may be some very minor performance variations, but the cards are built to work with either; otherwise, Intel systems still on PCIe 3.0 would be at a significant disadvantage for the current generation. This may change in the coming years, but for now, either slot type works just fine with most cards.

What’s an Infinity Cache?

It’s a proprietary bit of tech included with the AMD 6000 line of cards. It is a block of very fast cache memory built into the GPU itself (128MB on the 6900 XT) that lets the GPU access data it is likely to reuse during a task far more quickly than it could from regular memory. This can reduce frame time spikes and stuttering because the card does not have to go back to its slower video memory over and over again, an advantage that is most noticeable when compared to lower-end cards.
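To see why AMD would want a large on-die cache, it helps to estimate each card’s peak memory bandwidth from the memory speed figures in the spec table. The sketch below does that arithmetic. Note that the bus widths (384-bit for the RTX 3090, 256-bit for the RX 6900 XT) are not listed in this article and are taken from the cards’ published specifications, so treat this as a back-of-the-envelope estimate, not a measurement. The function name is made up for illustration.

```python
def bandwidth_gb_s(data_rate_gbps, bus_width_bits):
    """Peak memory bandwidth in GB/s.

    data_rate_gbps: effective per-pin data rate (the memory speed in the table).
    bus_width_bits: memory bus width (assumption: not from this article).
    """
    return data_rate_gbps * bus_width_bits / 8  # 8 bits per byte


# Memory speeds are from the spec table; bus widths are assumed from published specs.
rtx_3090 = bandwidth_gb_s(19.5, 384)
rx_6900_xt = bandwidth_gb_s(16, 256)

print(f"RTX 3090:   {rtx_3090:.0f} GB/s")
print(f"RX 6900 XT: {rx_6900_xt:.0f} GB/s")
```

Under those assumptions, the 6900 XT has a little over half the raw memory bandwidth of the 3090. The Infinity Cache is how AMD offsets that narrower path: data that is found in the cache never has to make the trip out to video memory at all.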

What’s the difference between DLSS and FSR?

Deep Learning Super Sampling (DLSS) and FidelityFX Super Resolution (FSR) are both upscaling solutions that allow a wider range of cards than ever before to run at the highest resolutions with only a minor loss in visual quality. However, how they work and who can use them differ.

NVIDIA’s DLSS uses an AI algorithm that runs on dedicated hardware called “Tensor Cores”. It is available only on cards that include that hardware. AMD’s FSR, on the other hand, is software-based, does not use AI, and does not require specific hardware. This means a much wider variety of cards can use it, including NVIDIA cards and previous generations.
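Both approaches get their speed-up the same basic way: the game renders fewer pixels internally and the upscaler fills in the rest. As a rough illustration, the sketch below computes the internal render resolution for a 4K output using the per-axis scale factors AMD published for the FSR 1.0 quality modes. It assumes the game follows those presets exactly, which individual titles may not, and the names used here are made up for illustration.

```python
# Per-axis scale factors AMD published for the FSR 1.0 quality modes.
# Assumption: the game uses these presets unmodified.
FSR1_SCALE = {
    "Ultra Quality": 1.3,
    "Quality": 1.5,
    "Balanced": 1.7,
    "Performance": 2.0,
}


def render_resolution(out_w, out_h, scale):
    """Internal render size for a given output size and per-axis scale factor."""
    return round(out_w / scale), round(out_h / scale)


OUT_W, OUT_H = 3840, 2160  # 4K output

for mode, scale in FSR1_SCALE.items():
    w, h = render_resolution(OUT_W, OUT_H, scale)
    share = (w * h) / (OUT_W * OUT_H)
    print(f"{mode}: renders {w}x{h}, about {share:.0%} of the 4K pixel count")
```

In Quality mode, for example, a 4K image is rendered at 2560x1440, and in Performance mode at 1920x1080, which is only a quarter of the pixels. That reduction in rendered pixels is where the extra frames come from, and the upscaler’s job is to hide the difference.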

What’s the difference between CUDA cores and Stream Processors?

They both refer to the same part of the GPU: the basic processing units where most of the computational work occurs. However, the two differ significantly in architecture, with a sprinkling of trade secrets in how they are built. They’re difficult to compare 1:1, as they work fundamentally differently from each other despite accomplishing the same task.