NVIDIA researchers have published a paper showing how an AI-designed circuit reduced the size of a GPU arithmetic circuit by 25%. They used a deep reinforcement learning agent called PrefixRL, which shows that AI can create new designs from scratch that are smaller, faster, and more efficient than those produced by current electronic design automation (EDA) tools.

As Moore’s law slows down, it becomes increasingly important to develop other techniques that improve the performance of a chip at the same technology process node. Our approach uses AI to design smaller, faster, and more efficient circuits to deliver more performance with each chip generation.

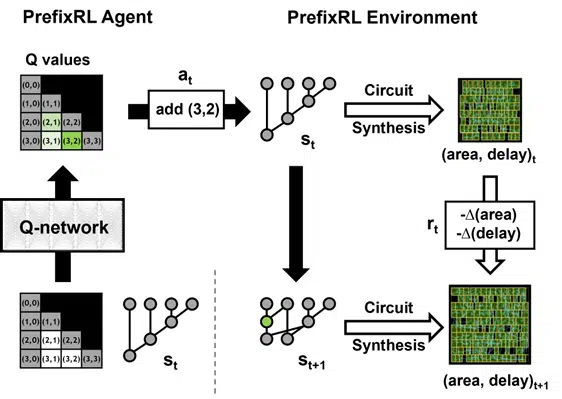

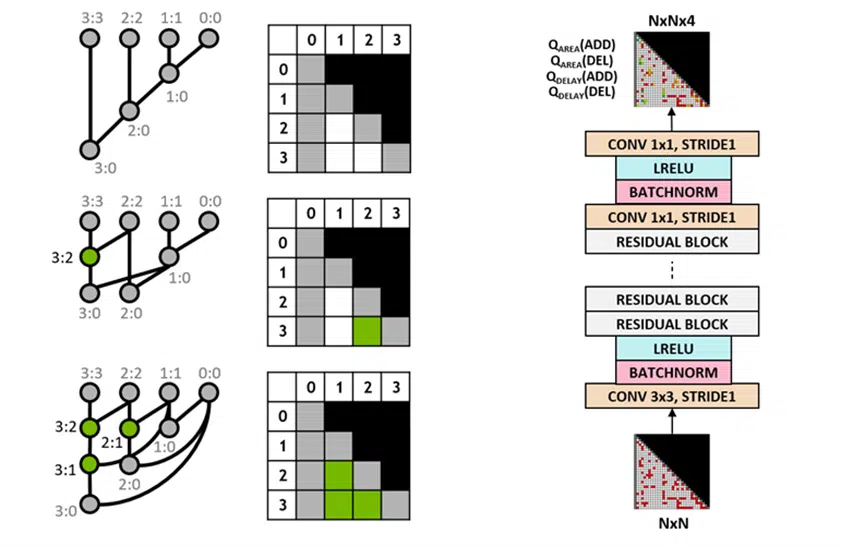

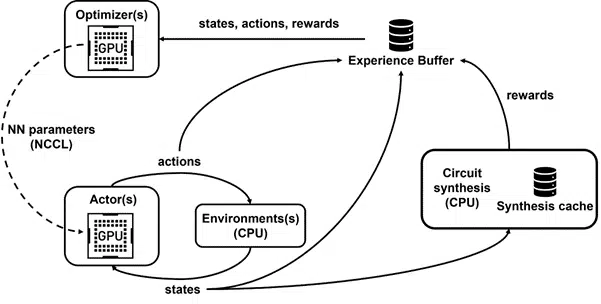

The paper, from researchers Rajarshi Roy, Jonathan Raiman, and Saad Godil, states that the Hopper GPU architecture already contains almost 13,000 AI-designed circuits. Their research focuses on circuit area and delay, with an emphasis on arithmetic circuits called parallel prefix circuits, which are found inside GPUs. They developed an in-house tool called Raptor to run the training, which used 256 CPUs per GPU and took over 32,000 GPU hours. The goal was to show that PrefixRL could find optimizations that conventional methods could not. At each step, the agent adds or removes a node in the prefix graph; a circuit is then generated from the graph and run through a physical synthesis tool, and its area and delay are measured.
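The add-or-remove-node step can be illustrated with a small Python sketch. It is not NVIDIA's implementation: the function names, the legalization rule (adding any missing lower parent), and the node-count and depth metrics are assumptions made for illustration. In particular, node count and depth are crude stand-ins for the area and delay that the researchers measure with physical synthesis.

```python
from typing import Dict, Set, Tuple

Node = Tuple[int, int]  # (msb, lsb): combines input bits lsb..msb


def initial_graph(n: int) -> Set[Node]:
    """Serial (ripple-style) starting graph: inputs (i, i) plus outputs (i, 0)."""
    return {(i, i) for i in range(n)} | {(i, 0) for i in range(n)}


def parents(nodes: Set[Node], node: Node) -> Tuple[Node, Node]:
    """Upper parent shares the msb; lower parent covers the remaining low bits."""
    msb, lsb = node
    k = min(l for (m, l) in nodes if m == msb and l > lsb)
    return (msb, k), (k - 1, lsb)


def legalize(nodes: Set[Node]) -> Set[Node]:
    """Assumed rule: add missing lower parents until every node has both parents."""
    nodes = set(nodes)
    changed = True
    while changed:
        changed = False
        for node in sorted(nodes):
            if node[0] == node[1]:
                continue  # input nodes have no parents
            _, lower = parents(nodes, node)
            if lower not in nodes:
                nodes.add(lower)
                changed = True
    return nodes


def add_node(nodes: Set[Node], node: Node) -> Set[Node]:
    """Action: add one node, then legalize the graph."""
    msb, lsb = node
    if not (0 <= lsb <= msb and (msb, msb) in nodes):
        raise ValueError("node is outside the graph")
    return legalize(nodes | {node})


def remove_node(nodes: Set[Node], node: Node) -> Set[Node]:
    """Action: remove one node; legalization may add it back if still needed."""
    msb, lsb = node
    if msb == lsb or lsb == 0:
        raise ValueError("inputs and outputs cannot be removed")
    return legalize(nodes - {node})


def size(nodes: Set[Node]) -> int:
    """Area proxy (assumption): number of non-input nodes."""
    return sum(1 for m, l in nodes if m != l)


def depth(nodes: Set[Node]) -> int:
    """Delay proxy (assumption): longest input-to-node path, in levels."""
    memo: Dict[Node, int] = {}

    def level(node: Node) -> int:
        if node[0] == node[1]:
            return 0
        if node not in memo:
            upper, lower = parents(nodes, node)
            memo[node] = 1 + max(level(upper), level(lower))
        return memo[node]

    return max(level(node) for node in nodes)
```

In this toy model, adding node `(3, 2)` to the 4-bit serial graph raises the node count from 3 to 4 and lowers the depth from 3 to 2, which is the kind of area-versus-delay trade-off the agent has to weigh.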

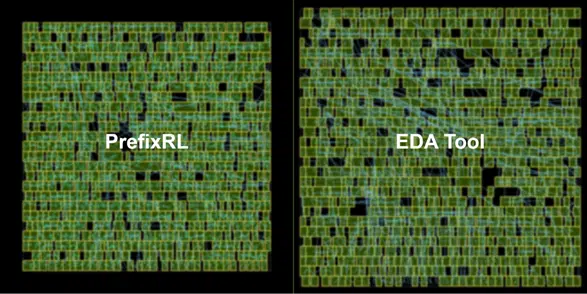

The best PrefixRL adder achieved 25% lower area than the EDA tool adder at the same delay. The prefix graphs that map to Pareto-optimal adder circuits after physical synthesis optimizations have irregular structures.
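To make "Pareto optimal" and "lower area at the same delay" concrete, here is a minimal Python sketch. The helper names are hypothetical and the (area, delay) values are made-up illustrative numbers, not results from the paper. A design is Pareto optimal if no other design is at least as good in both area and delay and strictly better in one.

```python
from typing import List, Tuple

Design = Tuple[int, int]  # (area, delay), lower is better, arbitrary units


def dominates(a: Design, b: Design) -> bool:
    """True if a is no worse than b in both metrics and differs from b."""
    return a[0] <= b[0] and a[1] <= b[1] and a != b


def pareto_front(designs: List[Design]) -> List[Design]:
    """Keep only the designs that no other design dominates."""
    return sorted({d for d in designs
                   if not any(dominates(o, d) for o in designs)})


def area_reduction(baseline_area: float, new_area: float) -> float:
    """Fractional area saving relative to a baseline at the same delay."""
    return (baseline_area - new_area) / baseline_area


# Illustrative numbers only (not measurements from the paper).
designs = [(100, 300), (75, 300), (60, 400), (90, 350), (60, 450)]
front = pareto_front(designs)          # [(60, 400), (75, 300)]
saving = area_reduction(100, 75)       # 0.25, i.e. 25% at delay 300
```

Here `(100, 300)` and `(75, 300)` have the same delay, so the second design is 25% smaller at equal delay and knocks the first off the front.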

To the best of our knowledge, this is the first method using a deep reinforcement learning agent to design arithmetic circuits. We hope that this method can be a blueprint for applying AI to real-world circuit design problems: constructing action spaces, state representations, RL agent models, optimizing for multiple competing objectives, and overcoming slow reward computation processes such as physical synthesis.

Source: NVIDIA