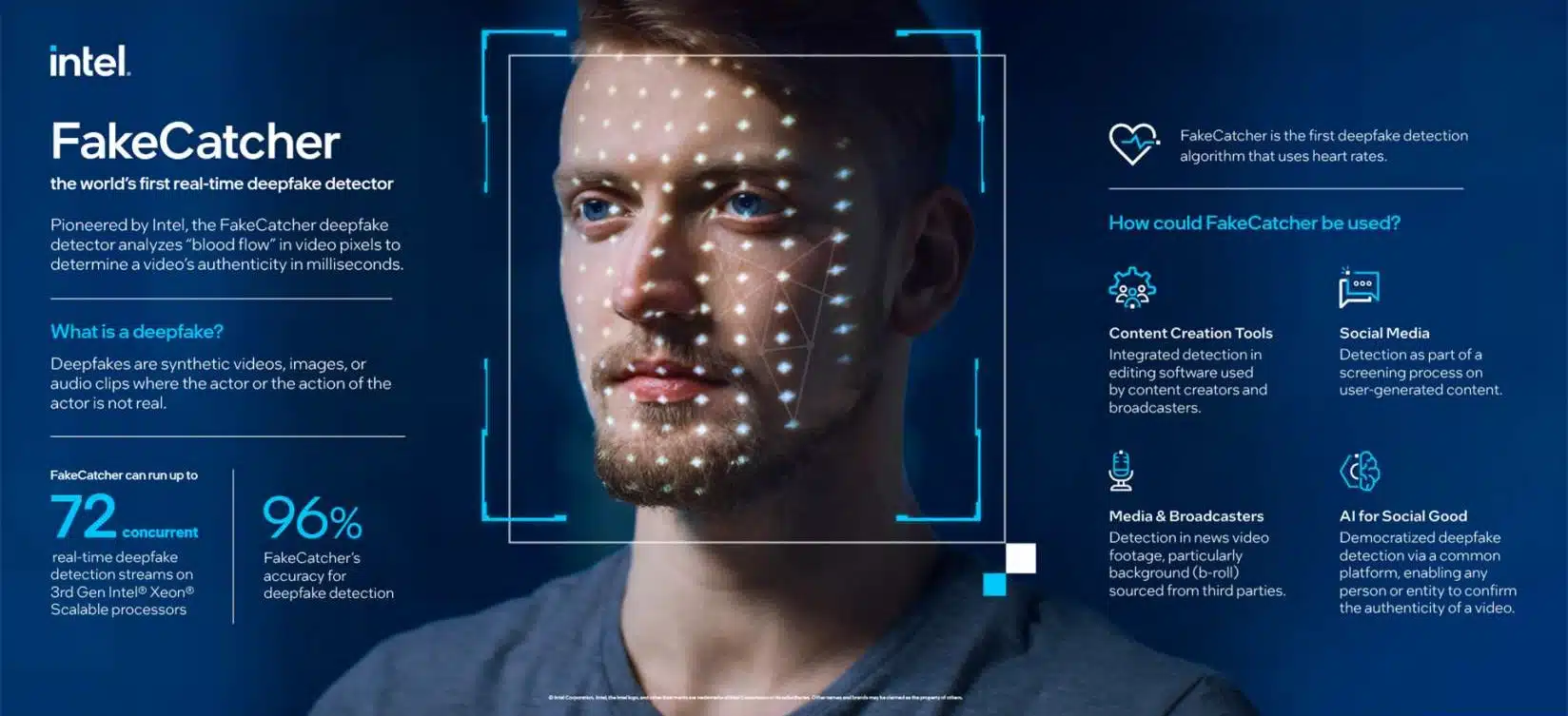

Intel has announced FakeCatcher, a real-time, AI-powered deepfake detector that runs as a web-based platform. The tool is part of Intel’s Responsible AI initiative, which aims to tackle deceptive content in media. Intel states that deepfake videos are a growing threat, with companies spending up to $188 billion on cybersecurity solutions. FakeCatcher is aimed at social media platforms, global news outlets, content creation tools, and nonprofit organizations, which can use it to prevent deepfake videos from being uploaded or spread.

FakeCatcher combines Intel hardware and software to assess the authenticity of a video in real time. Instead of searching raw data for signs of manipulation, its algorithms analyze biometric signals, such as the subtle color changes in veins caused by blood flow, to determine whether the subject of a video is a real person. Intel has said that the technology is up to 96% accurate in its detection. A short video explaining FakeCatcher is available in Intel’s press release.

“Deepfake videos are everywhere now. You have probably already seen them; videos of celebrities doing or saying things they never actually did.”

–Ilke Demir, senior staff research scientist in Intel Labs

From the FakeCatcher press release:

“Intel’s real-time deepfake detection uses Intel hardware and software and runs on a server and interfaces through a web-based platform. On the software side, an orchestra of specialist tools form the optimized FakeCatcher architecture. Teams used OpenVINO™ to run AI models for face and landmark detection algorithms. Computer vision blocks were optimized with Intel® Integrated Performance Primitives (a multi-threaded software library) and OpenCV (a toolkit for processing real-time images and videos), while inference blocks were optimized with Intel® Deep Learning Boost and with Intel® Advanced Vector Extensions 512, and media blocks were optimized with Intel® Advanced Vector Extensions 2. Teams also leaned on the Open Visual Cloud project to provide an integrated software stack for the Intel® Xeon® Scalable processor family. On the hardware side, the real-time detection platform can run up to 72 different detection streams simultaneously on 3rd Gen Intel® Xeon® Scalable processors.

Most deep learning-based detectors look at raw data to try to find signs of inauthenticity and identify what is wrong with a video. In contrast, FakeCatcher looks for authentic clues in real videos, by assessing what makes us human: subtle “blood flow” in the pixels of a video. When our hearts pump blood, our veins change color. These blood flow signals are collected from all over the face and algorithms translate these signals into spatiotemporal maps. Then, using deep learning, we can instantly detect whether a video is real or fake.”
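To make the idea of a spatiotemporal map more concrete, here is a minimal Python sketch. It is not Intel's implementation, which has not been published in this article. It assumes the face has already been detected and cropped, that each frame is a plain grid of green-channel values (a common choice in remote photoplethysmography work), and that the face is split into a simple grid of cells. The function names `spatiotemporal_map` and `normalize`, the `grid` parameter, and the synthetic input are all hypothetical.

```python
import math
from statistics import mean, pstdev


def normalize(series):
    """Scale a time series to zero mean and unit variance (flat series -> zeros)."""
    mu, sigma = mean(series), pstdev(series)
    if sigma == 0:
        return [0.0 for _ in series]
    return [(v - mu) / sigma for v in series]


def spatiotemporal_map(frames, grid=2):
    """Return a (cells x time) map of normalized mean intensity per face cell.

    frames: list of T frames, each an H x W list of green-channel values
    for an already-cropped face region (hypothetical input format).
    """
    height, width = len(frames[0]), len(frames[0][0])
    cell_h, cell_w = height // grid, width // grid
    rows = []
    for gy in range(grid):
        for gx in range(grid):
            # One time series per cell: the average value inside it, frame by frame.
            series = [
                mean(
                    frame[y][x]
                    for y in range(gy * cell_h, (gy + 1) * cell_h)
                    for x in range(gx * cell_w, (gx + 1) * cell_w)
                )
                for frame in frames
            ]
            rows.append(normalize(series))
    return rows


# Synthetic example: 60 frames of a 4x4 "face" whose brightness pulses
# at 1.2 Hz (about 72 beats per minute) in a 30 fps video.
frames = [
    [[100 + 2 * math.sin(2 * math.pi * 1.2 * t / 30) for _ in range(4)]
     for _ in range(4)]
    for t in range(60)
]
ppg_map = spatiotemporal_map(frames, grid=2)
print(len(ppg_map), len(ppg_map[0]))  # 4 60
```

Each row of the resulting map is the signal from one region of the face over time. In a system like the one described above, a map of this kind would be the input to a deep learning classifier; that classification step is outside the scope of this sketch.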