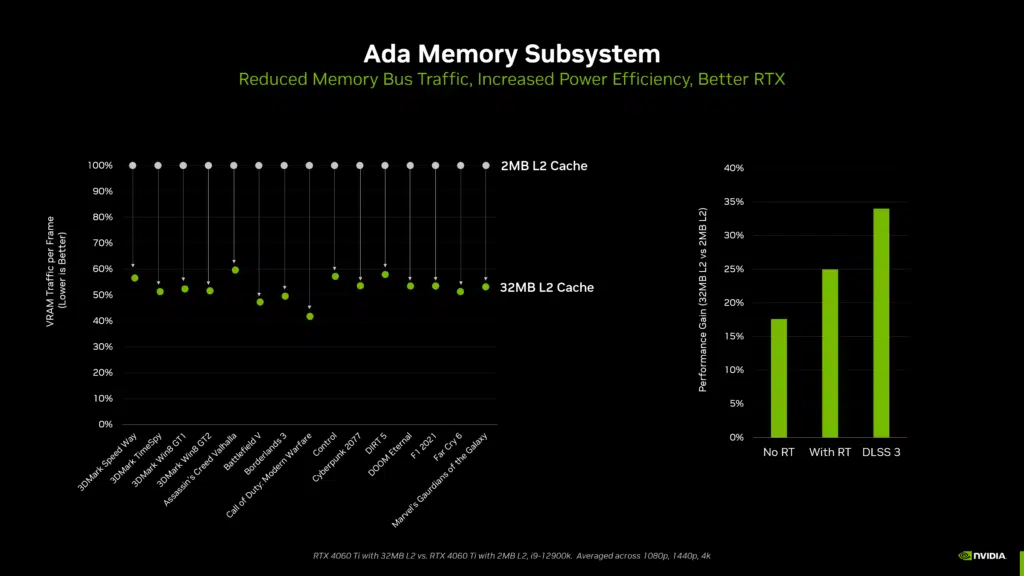

NVIDIA has shared a lengthy article, seemingly prompted by critics who believe the company isn’t putting enough VRAM into its GeForce graphics cards despite growing costs of ownership. The feature, titled “A Deeper Look At VRAM On GeForce RTX 40 Series Graphics Cards,” begins with a section that attempts to explain the importance of memory caches. While that looks interesting enough, there’s another section where NVIDIA stresses how the amount of VRAM depends on GPU architecture, with one notable quote being “higher capacity chips cost more to make, so a balance is required to optimize prices.” AMD will presumably respond with its own long-winded article about the amount of VRAM in its Radeon cards and how they compare to NVIDIA’s options.

From an NVIDIA GeForce post:

Gamers often wonder why a graphics card has a certain amount of VRAM.

Current-generation GDDR6X and GDDR6 memory is supplied in densities of 8Gb (1GB of data) and 16Gb (2GB of data) per chip. Each memory chip can connect to a 32-bit memory controller either through two separate 16-bit channels, giving one chip per controller, or through two 8-bit channels, so that two memory chips share a single 32-bit memory controller. This allows a 128-bit GPU to support either 4 memory chips or 8 memory chips.

Higher capacity chips cost more to make, so a balance is required to optimize prices.

On our new 128-bit memory bus GeForce RTX 4060 Ti GPUs, the 8GB model uses four 16Gb GDDR6 memory chips, and the 16GB model uses eight 16Gb chips. Mixing densities isn’t possible, preventing the creation of a 12GB model, for example. That’s also why the GeForce RTX 4060 Ti has an option with more memory (16GB) than the GeForce RTX 4070 Ti and 4070, which have 192-bit memory interfaces and therefore 12GB of VRAM.

Our 60-class GPUs have been carefully crafted to deliver the optimum combination of performance, price, and power efficiency, which is why we chose a 128-bit memory interface.

In short, higher capacity GPUs of the same bus width always have double the memory.
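
The arithmetic behind the excerpt can be sketched in a few lines of Python. This is an illustration only, not anything from NVIDIA's post: the function name `vram_options_gb` and its parameters are hypothetical, and it assumes exactly what the excerpt states (32-bit memory controllers, one or two chips per controller, chip densities of 8Gb or 16Gb, and no mixing of densities on one card).

```python
def vram_options_gb(bus_width_bits, densities_gbit=(8, 16), controller_width_bits=32):
    """Return the possible VRAM capacities (in GB) for a given memory bus width.

    Assumptions taken from the excerpt: each memory controller is 32 bits wide,
    hosts either one chip or two chips, and every chip on a card has the same
    density.
    """
    controllers = bus_width_bits // controller_width_bits
    options = set()
    for density in densities_gbit:
        gb_per_chip = density // 8  # 8 gigabits = 1 gigabyte
        for chips_per_controller in (1, 2):
            options.add(controllers * chips_per_controller * gb_per_chip)
    return sorted(options)


print(vram_options_gb(128))  # 128-bit bus, e.g. GeForce RTX 4060 Ti
print(vram_options_gb(192))  # 192-bit bus, e.g. GeForce RTX 4070 Ti and 4070
```

Under these assumptions, a 128-bit bus yields 4GB, 8GB, or 16GB, so a 12GB card is not reachable, while a 192-bit bus yields 6GB, 12GB, or 24GB.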