Power and Temperature

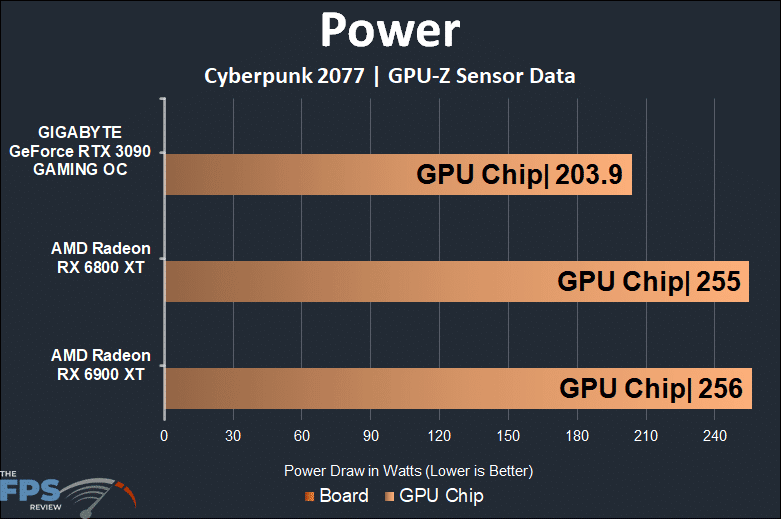

To test power and temperature, we perform a manual run-through in Cyberpunk 2077 at “Ultra” settings to capture real-world in-game data. We record the results from GPU-Z sensor data, and for our wattage figures we report the “Board Power” and “GPU Chip Power” readings when available.
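For readers who want to reduce a sensor log of their own to comparable figures, here is a minimal Python sketch. It assumes the log is comma-separated text with a header row. The column names and sample values below are hypothetical placeholders, not measurements from this review, and should be matched to whatever your log actually contains. The function name `summarize_sensor_log` is our own.

```python
import csv
import io
from statistics import mean


def summarize_sensor_log(log_text, columns):
    """Return {column: (average, peak)} for each requested column found in the log.

    Assumes comma-separated text with a header row. Requested columns that
    are missing from the header are left out of the result.
    """
    reader = csv.reader(io.StringIO(log_text))
    header = [name.strip() for name in next(reader)]
    index = {name: header.index(name) for name in columns if name in header}
    samples = {name: [] for name in index}
    for row in reader:
        for name, i in index.items():
            if i >= len(row):
                continue  # short or blank row
            try:
                samples[name].append(float(row[i].strip()))
            except ValueError:
                continue  # blank or non-numeric cell
    return {name: (mean(vals), max(vals)) for name, vals in samples.items() if vals}


# Hypothetical sample data for illustration only (not results from this review).
SAMPLE_LOG = """Date , Board Power [W] , GPU Chip Power [W]
t0 , 300.0 , 200.0
t1 , 310.0 , 210.0
t2 , 320.0 , 220.0
"""

summary = summarize_sensor_log(SAMPLE_LOG, ["Board Power [W]", "GPU Chip Power [W]"])
for name, (avg, peak) in summary.items():
    print(f"{name}: average {avg:.1f}, peak {peak:.1f}")
```

If a card does not log a given sensor, that column is simply absent from the summary, which mirrors the "when available" caveat above.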

The AMD GPUs do not report Board Power in GPU-Z, so for them we compare the GPU Chip Power reading only. In terms of GPU Chip Power, the AMD Radeon RX 6900 XT pulls the most wattage at 256W, but that is only 1W higher than the AMD Radeon RX 6800 XT. This is consistent with AMD’s claim that the Radeon RX 6800 XT and RX 6900 XT share the same 300W TDP; the RX 6900 XT does not consume meaningfully more power.

Relatively speaking, though, the GIGABYTE RTX 3090 draws much less GPU Chip Power, a difference of about 52W. For the record, the Board Power on the GIGABYTE RTX 3090 is 368.8W.

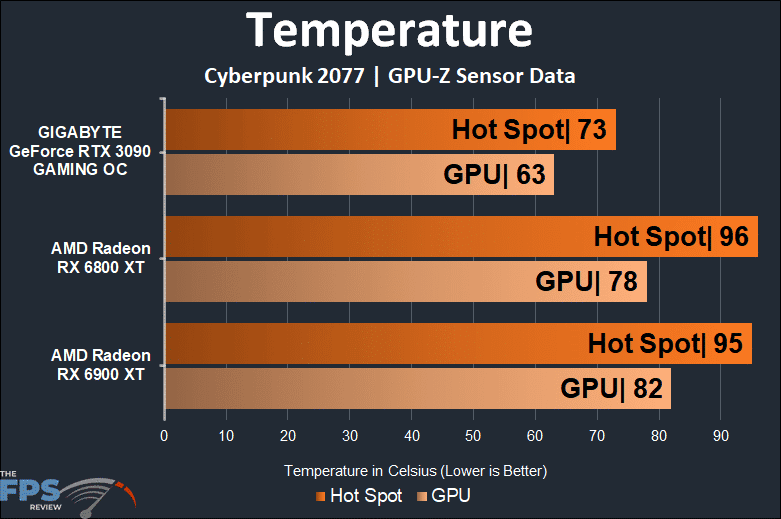

For temperature, we report both the GPU (edge) temperature and the Hot Spot (junction) temperature. Keep in mind that the GIGABYTE GeForce RTX 3090 GAMING OC uses GIGABYTE’s custom cooler, while the two AMD video cards use AMD’s reference cooler.

The AMD Radeon RX 6900 XT gets just as hot as the Radeon RX 6800 XT on the Hot Spot temperature. However, the edge temperature is about 4 degrees hotter on the Radeon RX 6900 XT, which makes sense considering it has more shaders and does more work. Compared to the GIGABYTE card, it runs much cooler, but keep in mind that the GIGABYTE is a custom-built card.

The temperatures are not concerning, and the fans remained very quiet during operation. We do wonder how hot the memory is running, but seeing as it is GDDR6 and not GDDR6X, it shouldn’t run as hot as the RTX 3090’s GDDR6X memory.