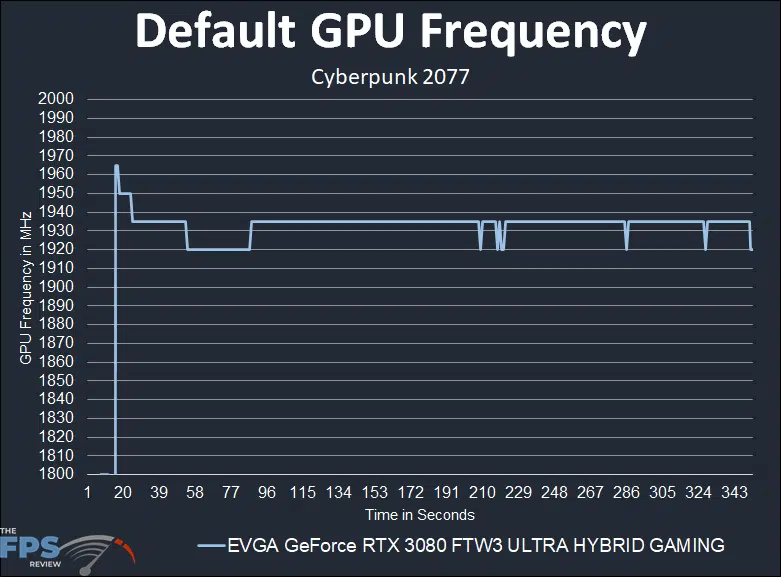

Default GPU Frequency

Before we look at performance, we need to find out the actual real-world gaming frequency the video card runs at. With both NVIDIA and AMD GPUs today, the GPU frequency is very dynamic. What is quoted as the “Boost Clock” is not necessarily the frequency the GPU will actually run at, and GPUs today can typically exceed the “Boost Clock” dynamically. Finding out what the card actually runs at lets us see how well things like cooling and power headroom are working.

To do this, we record the GPU clock frequency over time while playing a game. We use Cyberpunk 2077 for this, with a very long manual run-through at “Ultra” settings. We also record GPU-Z sensor data to look at GPU temperature, voltage, and power.
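As a rough illustration of how a logged run like this can be boiled down to a typical in-game clock, here is a minimal Python sketch. It assumes the sensor log is a comma-separated text file with a header row and a column named `GPU Clock [MHz]`; that column name, the `summarize_clock` helper, and the sample rows are assumptions made for the example, not our measurement data or tooling.

```python
import csv
import io
import statistics


def summarize_clock(log_text, column="GPU Clock [MHz]"):
    """Return (median, minimum, maximum) for one numeric column of a CSV sensor log.

    The column name is an assumption about the log layout; adjust it to
    match the header of the file you actually have.
    """
    reader = csv.reader(io.StringIO(log_text))
    # Header cells may be padded with spaces, so strip them before matching.
    header = [name.strip() for name in next(reader)]
    index = header.index(column)

    values = []
    for row in reader:
        if len(row) <= index:
            continue  # skip short or empty lines
        try:
            values.append(float(row[index].strip()))
        except ValueError:
            continue  # skip blank cells or repeated header rows

    if not values:
        raise ValueError(f"no numeric samples found in column {column!r}")
    return statistics.median(values), min(values), max(values)


# Made-up sample rows for illustration only (not real measurements).
sample = """Date , GPU Clock [MHz] , GPU Temperature [°C]
2021-01-01 12:00:00 , 1935.0 , 55.0
2021-01-01 12:00:01 , 1950.0 , 55.2
2021-01-01 12:00:02 , 1935.0 , 55.3
2021-01-01 12:00:03 , 1920.0 , 55.5
2021-01-01 12:00:04 , 1965.0 , 55.4
"""

median_mhz, low_mhz, high_mhz = summarize_clock(sample)
print(median_mhz, low_mhz, high_mhz)
```

The median is used here rather than the mean so that brief dips during loading screens or menus do not drag down the "mostly around" figure.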

The default GPU Boost clock on this video card is 1800MHz. While gaming, the actual in-game frequency mostly sits around 1935MHz. It remains very solid and consistent, which indicates that the excellent cooling on the GPU is keeping clocks stable. That is a very high frequency: 135MHz over this card's Boost clock, and 225MHz over the Founders Edition's boost clock. In our testing, the Founders Edition itself boosted to around 1837MHz, so at 1935MHz this card runs roughly 98MHz higher than the Founders Edition does in practice.
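The clock comparison above is simple subtraction, and it can be sketched in a few lines of Python. The numbers are the ones quoted in this section; the `boost_offset` helper and the variable names are hypothetical and exist only for this example.

```python
def boost_offset(observed_mhz, reference_mhz):
    """Return how far an observed clock sits above a reference clock, in MHz."""
    return observed_mhz - reference_mhz


observed = 1935      # typical in-game clock of this card (MHz)
rated_boost = 1800   # this card's quoted Boost clock (MHz)
fe_observed = 1837   # Founders Edition in-game clock from our testing (MHz)

over_rated = boost_offset(observed, rated_boost)
over_fe = boost_offset(observed, fe_observed)

print(over_rated)                                 # 135
print(over_fe)                                    # 98
print(round(100 * over_rated / rated_boost, 1))   # 7.5 (percent over rated Boost)
print(round(100 * over_fe / fe_observed, 1))      # 5.3 (percent over FE in-game clock)
```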

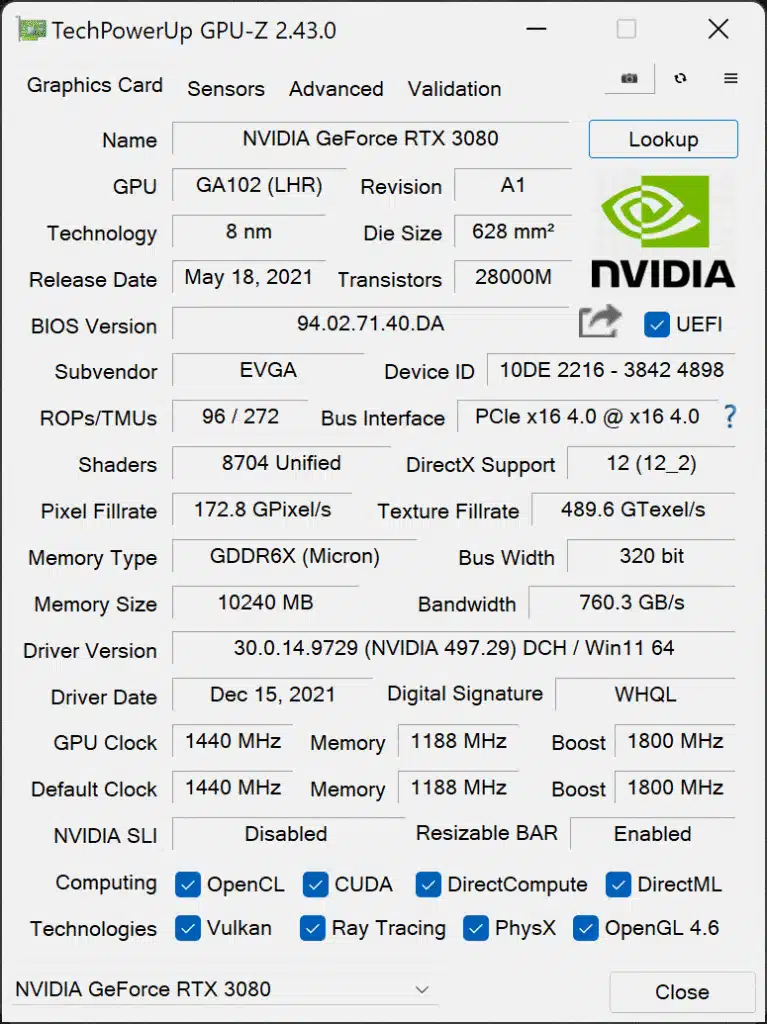

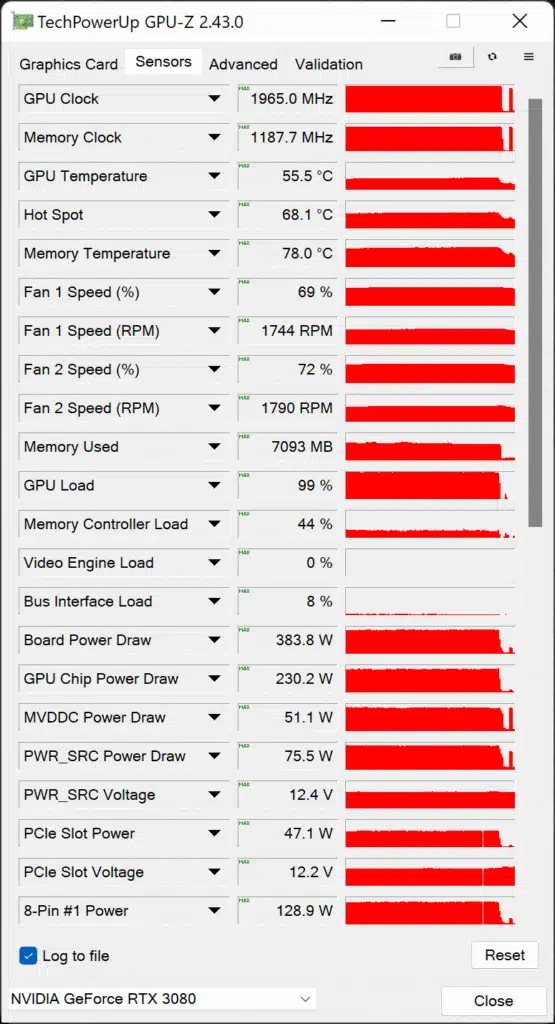

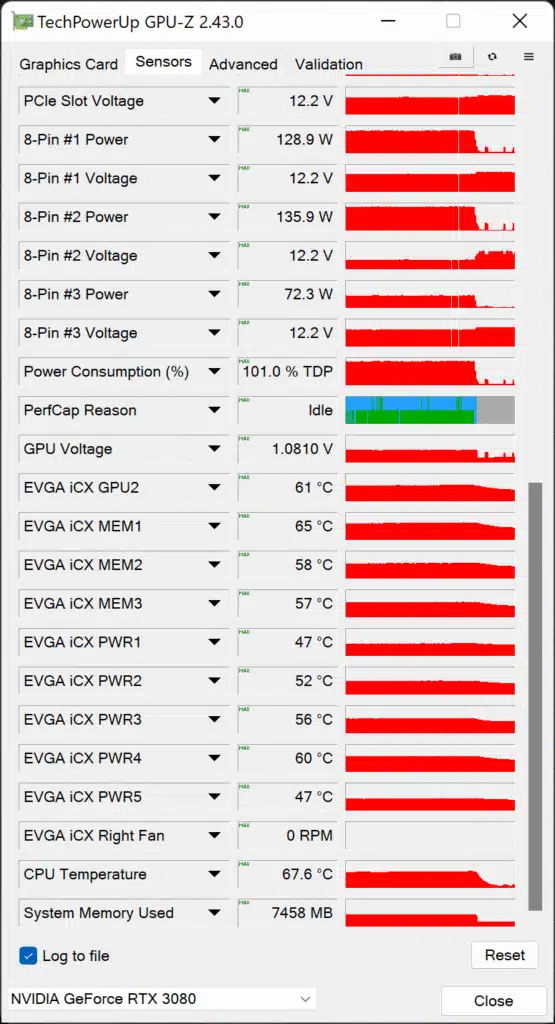

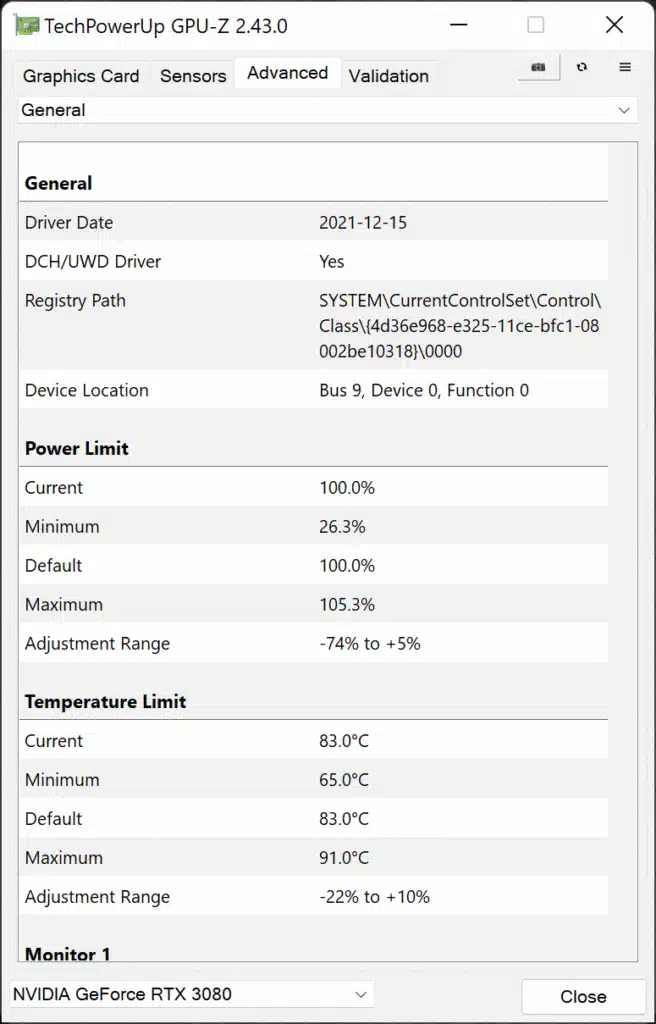

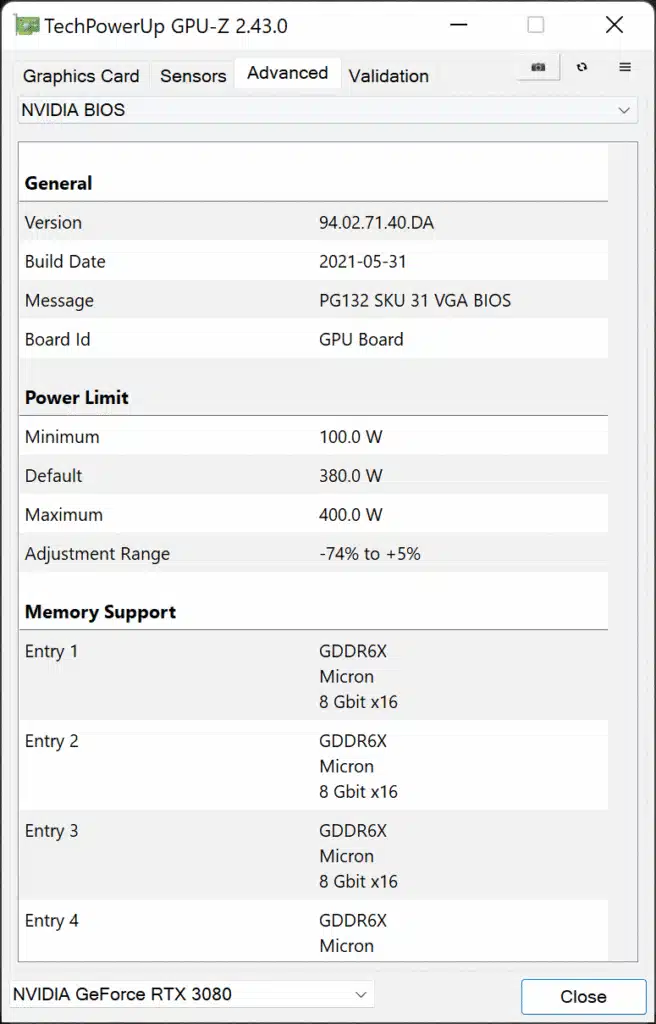

GPU-Z

GPU-Z shows the GPU clock maxing out at 1965MHz and the GPU Temperature at 55.5°C, with a 68.1°C GPU Hot Spot. The memory temperature hit 78°C and the fan speeds were around 70%. The Board Power Draw was 383W and the GPU Voltage was 1.0810V. As you can also see in the GPU-Z sensor data, this video card has multiple EVGA iCX monitoring points for temperature. That monitoring is a big part of what makes this card appeal to enthusiasts. You can see multiple memory, power, and VRM temperatures across the card, and the components appear to be kept well cooled.