Performance Testing & Methodology

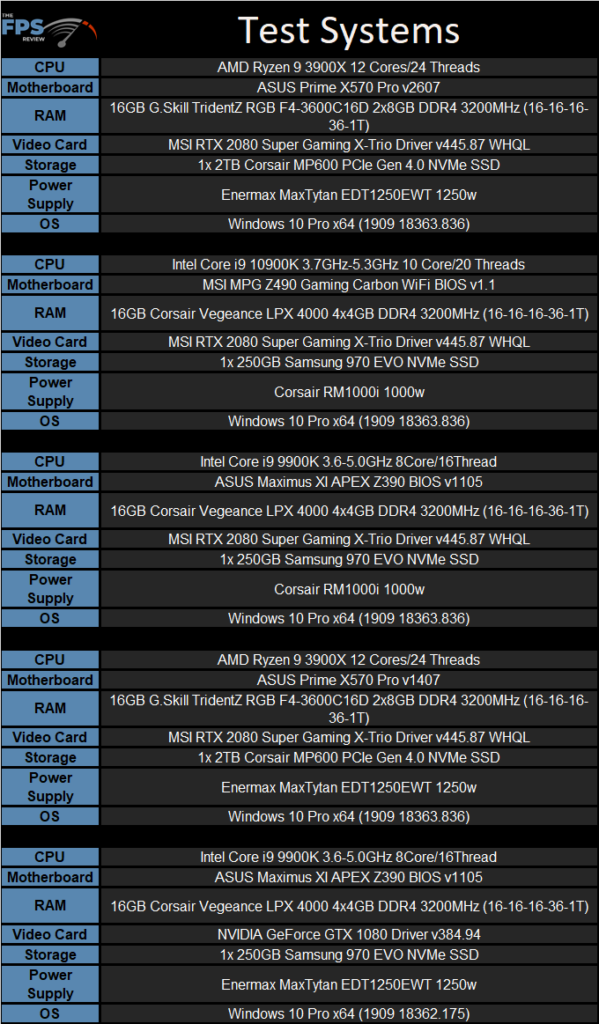

For general performance testing, we use the same basic test setup for both CPU and motherboard evaluation, which makes the results comparable. Each test is run multiple times to ensure accuracy, and the middle result is used in each case. The following system configurations were used for all benchmarks and general testing.
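As a rough illustration of the "middle result" approach described above, here is a minimal Python sketch. The function name `middle_result` and the sample scores are hypothetical and are not taken from our test data. It also assumes that, if an even number of runs were ever recorded, the lower of the two middle values would be reported so the figure is always a score that was actually measured.

```python
from statistics import median_low


def middle_result(runs):
    """Return the middle value from repeated runs of one benchmark.

    median_low is used so the reported figure is always one of the
    measured scores, even if an even number of runs is supplied
    (an assumption; the article does not state the run count).
    """
    if not runs:
        raise ValueError("at least one run is required")
    return median_low(runs)


# Hypothetical scores from three runs of a single benchmark.
scores = [1502.0, 1498.5, 1510.2]
print(middle_result(scores))  # prints 1502.0
```

Taking the middle value rather than the average keeps a single unusually fast or slow run from skewing the reported score.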

Because Windows 10 has received potential scheduler improvements and other tweaks over time, we are using the latest build available at the time of this writing. For reference, the current Windows 10 build is 2004, and we are using Windows 10 Professional. All the latest patches have been applied, and the driver versions are noted in the specifications. The driver versions are not necessarily the newest, as we want game performance to be more consistent across a broader sample of system configurations. We will update these periodically and retest as needed.

All systems were freshly formatted, and all the latest drivers and OS patches were used. All the systems were updated to their latest BIOS revisions. Finally, for the Intel system, I installed the CPU microcode updates relevant to that CPU. It’s important to note that build 1909 does contain improved mitigations for several security flaws on Intel processors. However, I did not go out of my way to download any additional or optional mitigation patches. Hyper-Threading (SMT on AMD) also remained enabled for all testing.

We are using the performance power plan on all our test configurations. Essentially, we created a “best case” scenario for each system outside of the hardware configurations. For the hardware, it was impossible to use the same memory modules on all the test systems due to the nature of memory compatibility on different motherboards. That said, we were able to use common frequencies and keep the timings relatively close for the most part. The memory timings and the speeds we used are referenced in the specification tables above.

One thing to keep in mind is that performance is largely determined by the CPU and memory configuration, or by the graphics card, rather than by the motherboard itself. The benchmarks here verify that the motherboard held up to the testing and that no problems with firmware, thermals, or power delivery caused performance to drop off. Overclocked results are provided not only to showcase what the motherboard can do when pushed, but also to show that it could sustain the necessary power delivery through another pass of the torture testing, with greater demand placed on it than in stock operation.

Finally, systems were run at stock and overclocked values. For clarification, “stock” settings are the automatic or default values in the BIOS, which allow the CPUs tested to operate at their default base and boost clocks. Overclocked values provided are all-core overclocks unless otherwise noted. These clock speeds are the maximum 24/7 stable overclock we could achieve for each configuration. AVX offsets are not used unless stated. Results where clock speeds are not explicitly shown are “stock” values. These are checked to ensure that boost clock behavior is normal and that temperatures are in the correct ranges, to avoid throttling and performance anomalies as much as possible.