Quality Settings Performance

Now that we have compared the video cards to each other, let’s look at performance from another perspective. Let’s compare the game’s graphics settings themselves and see how demanding each one is relative to the others. For this we are going to use just two video cards, the AMD Radeon RX 5700 XT and the NVIDIA GeForce RTX 2080 FE, at 1440p. We will compare graphics settings from “Ultra Nightmare” down to “Low.”

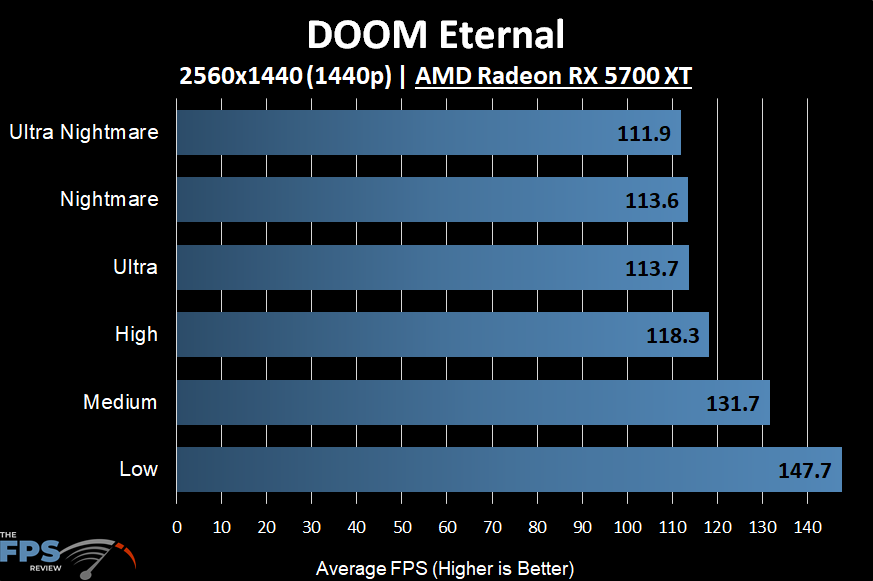

AMD

In this first graph we are looking at the AMD Radeon RX 5700 XT across all graphics settings at 1440p. The first thing we notice is that we really don’t see a big impact on performance until we get down to the “High,” “Medium,” and “Low” settings. There doesn’t seem to be much difference between “Ultra,” “Nightmare,” and “Ultra Nightmare.” The move from “Ultra” to “High” brings a bump in performance, and there’s a big difference between “High” and “Medium.”

Overall, from “Low” to “Ultra Nightmare” there’s a 32% difference in performance. That can make a big difference in playability, depending on the resolution and video card. In this case, the Radeon RX 5700 XT handles every setting at 1440p with no problem.
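As a rough illustration of how a figure like this can be worked out, here is a minimal Python sketch. The text above does not say which setting the percentage is measured against, so the sketch shows both common conventions. The FPS values are hypothetical placeholders chosen only to make the arithmetic easy to follow; they are not measurements from this review, and the function names are made up for this example.

```python
def percent_gain(slow_fps: float, fast_fps: float) -> float:
    """Percent increase, measured relative to the slower (more demanding) setting."""
    return (fast_fps - slow_fps) / slow_fps * 100.0


def percent_drop(slow_fps: float, fast_fps: float) -> float:
    """Percent decrease, measured relative to the faster (less demanding) setting."""
    return (fast_fps - slow_fps) / fast_fps * 100.0


# Hypothetical placeholder averages, NOT results from this review.
ultra_nightmare_fps = 100.0
low_fps = 132.0

gain = percent_gain(ultra_nightmare_fps, low_fps)
drop = percent_drop(ultra_nightmare_fps, low_fps)

print(f"Low is {gain:.1f}% faster than Ultra Nightmare")
print(f"Ultra Nightmare is {drop:.1f}% slower than Low")
```

The same pair of averages gives two different percentages depending on the baseline, so it helps to keep the convention consistent when comparing the two cards.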

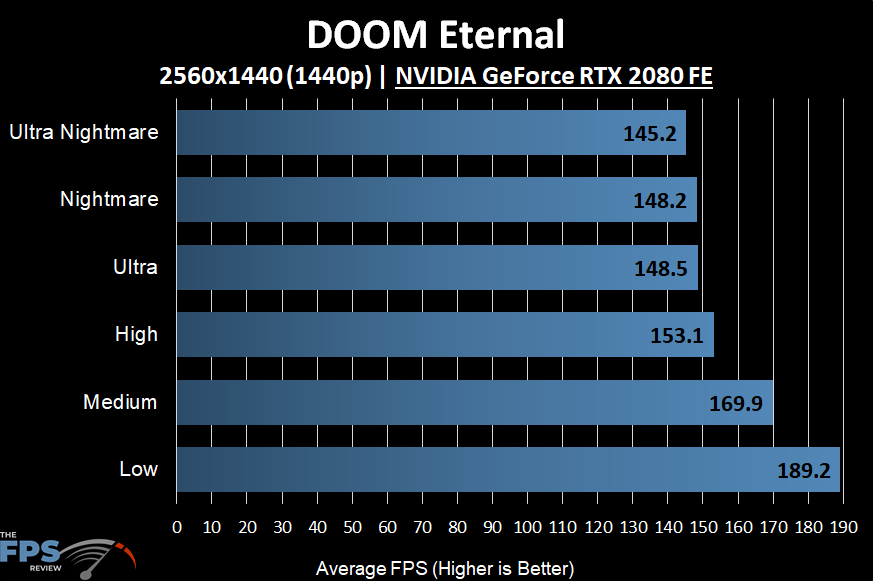

NVIDIA

The NVIDIA GeForce RTX 2080 FE follows about the same pattern as the Radeon RX 5700 XT. There is little or no perceivable difference between the “Nightmare” and “Ultra” settings. Then we see a change between “Ultra” and “High” and another between “High” and “Medium.”

From “Low” to “Ultra Nightmare” there is a 30% difference in performance. This is very close to the Radeon RX 5700 XT, which was at 32%. It appears that AMD and NVIDIA both see roughly the same difference in performance between the quality settings. There doesn’t seem to be any advantage for one over the other here, apart from the generally better performance NVIDIA GPUs seem to have in this game compared to AMD GPUs.