Call of Duty: Modern Warfare

We are using the new Call of Duty: Modern Warfare. We manually selected custom game settings, with every option set to “High.” This game runs on the DX12 API. For our evaluation, we perform a manual run-through of the first section of the campaign: we start at the helicopter drop, call an airstrike on the town below, and work our way through the town taking out the baddies.

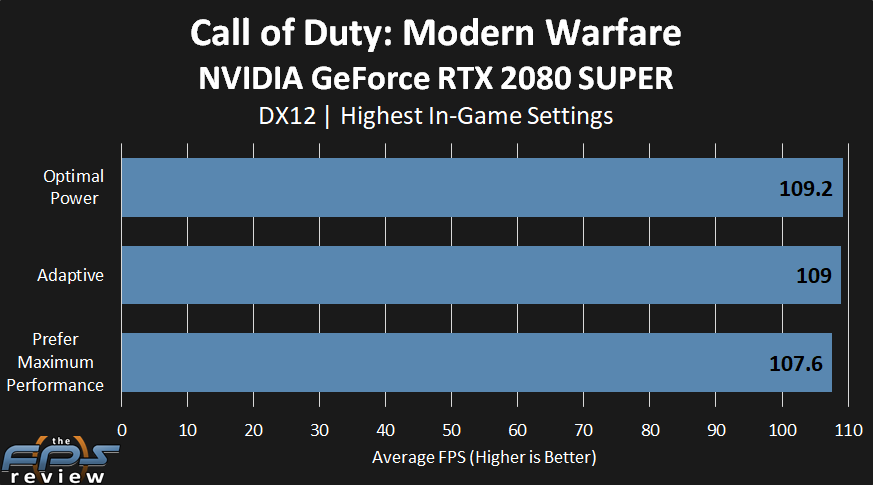

GeForce RTX 2080 SUPER

This game differs from the previous one in a couple of ways. We are performing a manual run-through here, so there is some run-to-run variance and a margin of error. This game also just performs a lot better overall.

What we see, though, is that Optimal Power and Adaptive are dead even on performance, so our run-through does seem to be very consistent. However, we are actually losing a bit of performance at Prefer Maximum Performance. It’s slight, 2 FPS, and could be considered within our margin of error. It’s obviously not noticeable in-game either. Still, it is there, and it yells at you when you look at the graph.

Our only theory here relates to GPU frequency. As we saw previously, with Prefer Maximum Performance the GPU clock can dip below what we see with Optimal Power and Adaptive, for whatever reason. This could cause a slight FPS loss. Our going theory is that Prefer Maximum Performance makes the video card hit its power limit sooner, which cuts back the clock speed a bit and does seem to affect performance ever so slightly.
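One way to check the power-limit theory would be to log GPU clock and power draw during the run-through in each power mode, then compare how often the card sits at its limit. The sketch below is only illustrative. It assumes a log captured with `nvidia-smi --query-gpu=clocks.gr,power.draw,power.limit --format=csv,noheader,nounits -l 1`, and the function names and the 5 W headroom threshold are our own assumptions, not something we measured.

```python
def parse_sample(line):
    """Parse one CSV log line: 'clock_mhz, power_draw_w, power_limit_w'."""
    clock_mhz, draw_w, limit_w = (float(field) for field in line.split(","))
    return clock_mhz, draw_w, limit_w


def mean_clock(lines):
    """Average GPU clock (MHz) across all non-empty log lines."""
    samples = [parse_sample(line) for line in lines if line.strip()]
    if not samples:
        return 0.0
    return sum(clock for clock, _, _ in samples) / len(samples)


def power_limited_fraction(lines, headroom_w=5.0):
    """Fraction of samples whose power draw is within headroom_w of the limit.

    headroom_w is an assumed threshold for "at the power limit".
    """
    samples = [parse_sample(line) for line in lines if line.strip()]
    if not samples:
        return 0.0
    near_limit = sum(1 for _, draw, limit in samples if limit - draw <= headroom_w)
    return near_limit / len(samples)
```

If the theory holds, a log taken under Prefer Maximum Performance should show a higher power-limited fraction and a slightly lower mean clock than logs taken under Optimal Power or Adaptive.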

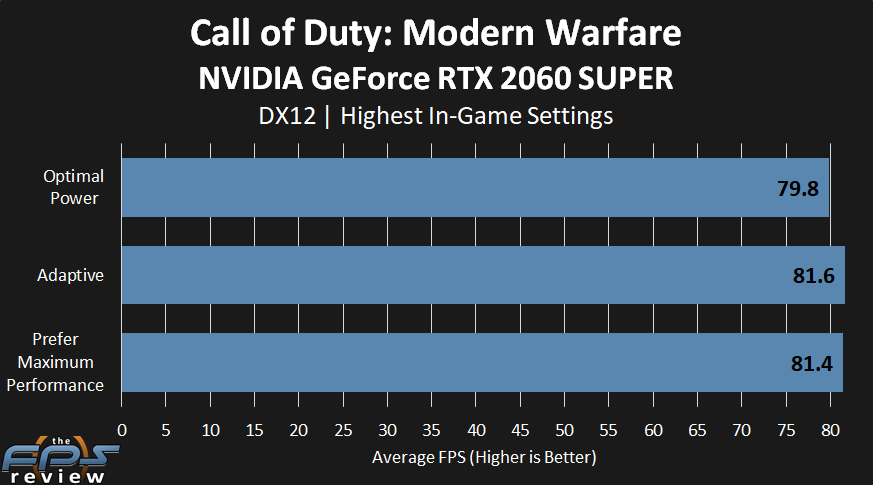

GeForce RTX 2060 SUPER

What’s even odder, though, are the results on the GeForce RTX 2060 SUPER. They are the complete opposite of the GeForce RTX 2080 SUPER results.

On this video card, it is Optimal Power that is the “slowest” at 79.8 FPS, while Adaptive and Prefer Maximum Performance are a little over 1 FPS faster at 81 FPS. We really don’t have an explanation for that either. Again, it is margin-of-error stuff and not noticeable in-game, but the result was consistent, so it has to mean something, right?
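To put that gap in perspective, here is a quick calculation using the two figures quoted above, 79.8 FPS and 81 FPS. The 3% margin used for a manual run-through is purely an assumed threshold for illustration, not a measured value.

```python
def percent_difference(baseline_fps, other_fps):
    """Relative difference of other_fps versus baseline_fps, in percent."""
    return (other_fps - baseline_fps) / baseline_fps * 100.0


def within_margin(delta_pct, margin_pct=3.0):
    """True if the difference falls inside the assumed run-to-run margin."""
    return abs(delta_pct) <= margin_pct


optimal_power_fps = 79.8  # RTX 2060 SUPER, Optimal Power (from the text)
adaptive_fps = 81.0       # RTX 2060 SUPER, Adaptive / Prefer Maximum Performance

delta_fps = adaptive_fps - optimal_power_fps
delta_pct = percent_difference(optimal_power_fps, adaptive_fps)

print(f"{delta_fps:.1f} FPS, {delta_pct:.1f}%")  # prints "1.2 FPS, 1.5%"
print(within_margin(delta_pct))                  # prints "True"
```

A difference of about 1.5% is small enough that, on a manual run-through, it is hard to separate from run-to-run variance without repeating the test several times.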